Related tools

ODesign

All-atom generative AI for designing protein binders. Specify target binding sites and generate diverse binding proteins with fine-grained control over interaction parameters.

ProFam

ProFam-1 is a protein family language model for family-conditioned sequence generation. Provide a protein family FASTA/MSA and generate new sequences with model likelihood scores for downstream ranking and screening.

EvoDiff

EvoDiff is a diffusion-based protein sequence generation framework from Microsoft Research. ProteinIQ currently wraps the EvoDiff-Seq OA_DM_38M model for unconditional protein generation, motif scaffolding, and user-sequence inpainting.

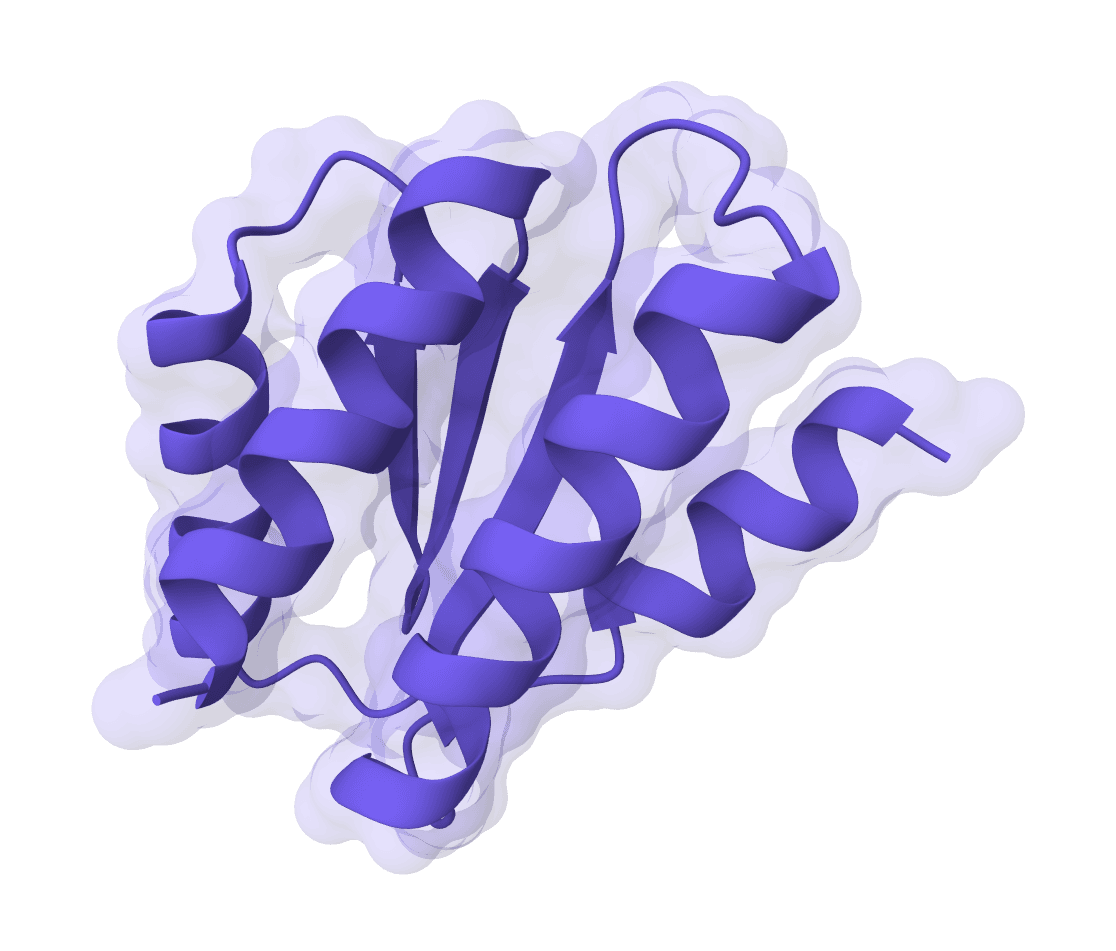

PocketFlow

PocketFlow is a structure-based molecular generative model that designs novel drug-like molecules within protein binding pockets. It uses autoregressive flow modeling with chemical knowledge to generate 100% chemically valid, highly drug-like compounds.

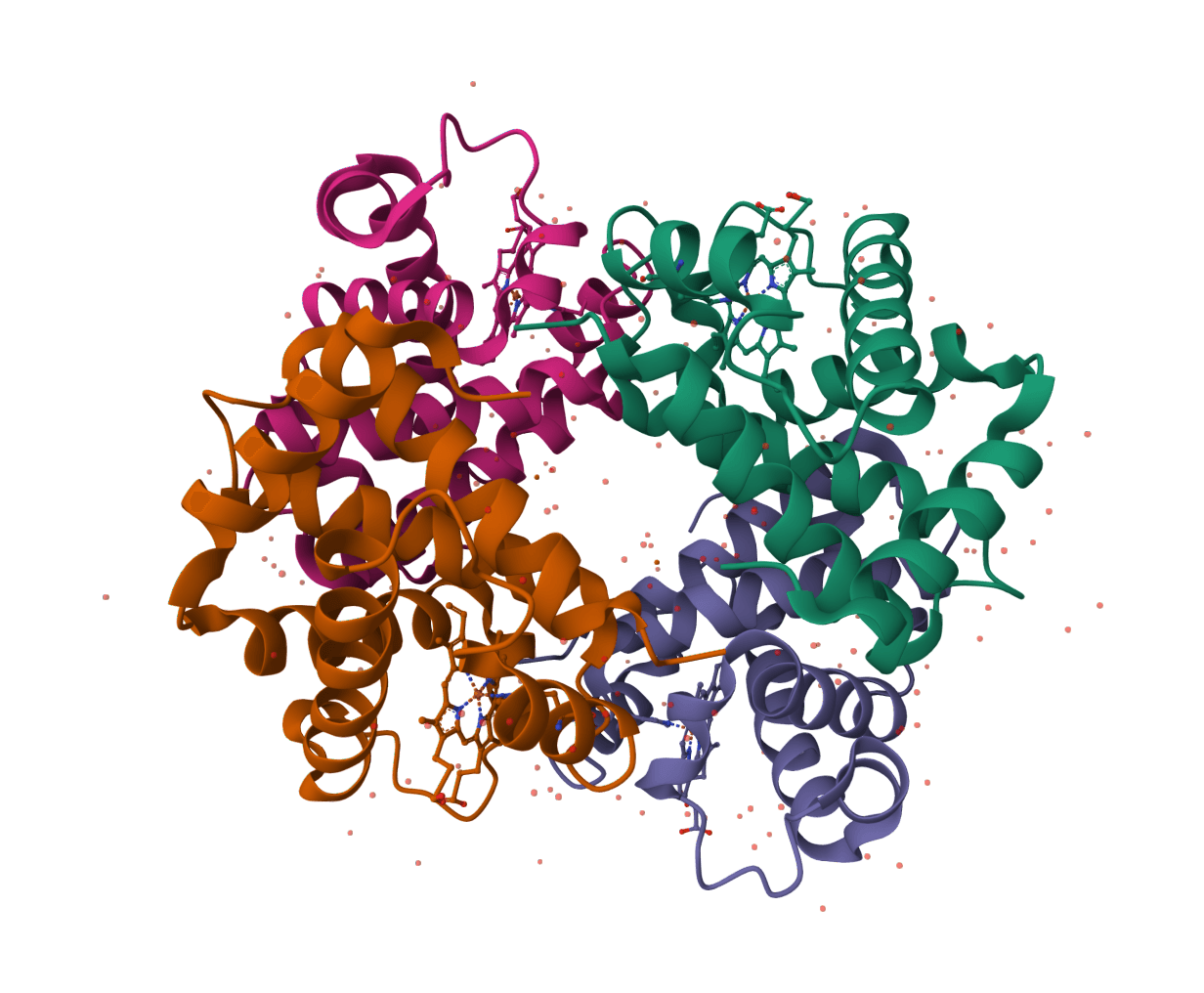

RFdiffusion

RFdiffusion is a state-of-the-art protein structure generation tool that uses diffusion models to design proteins de novo, create binders, scaffold motifs, and generate symmetric oligomers with atomic precision.

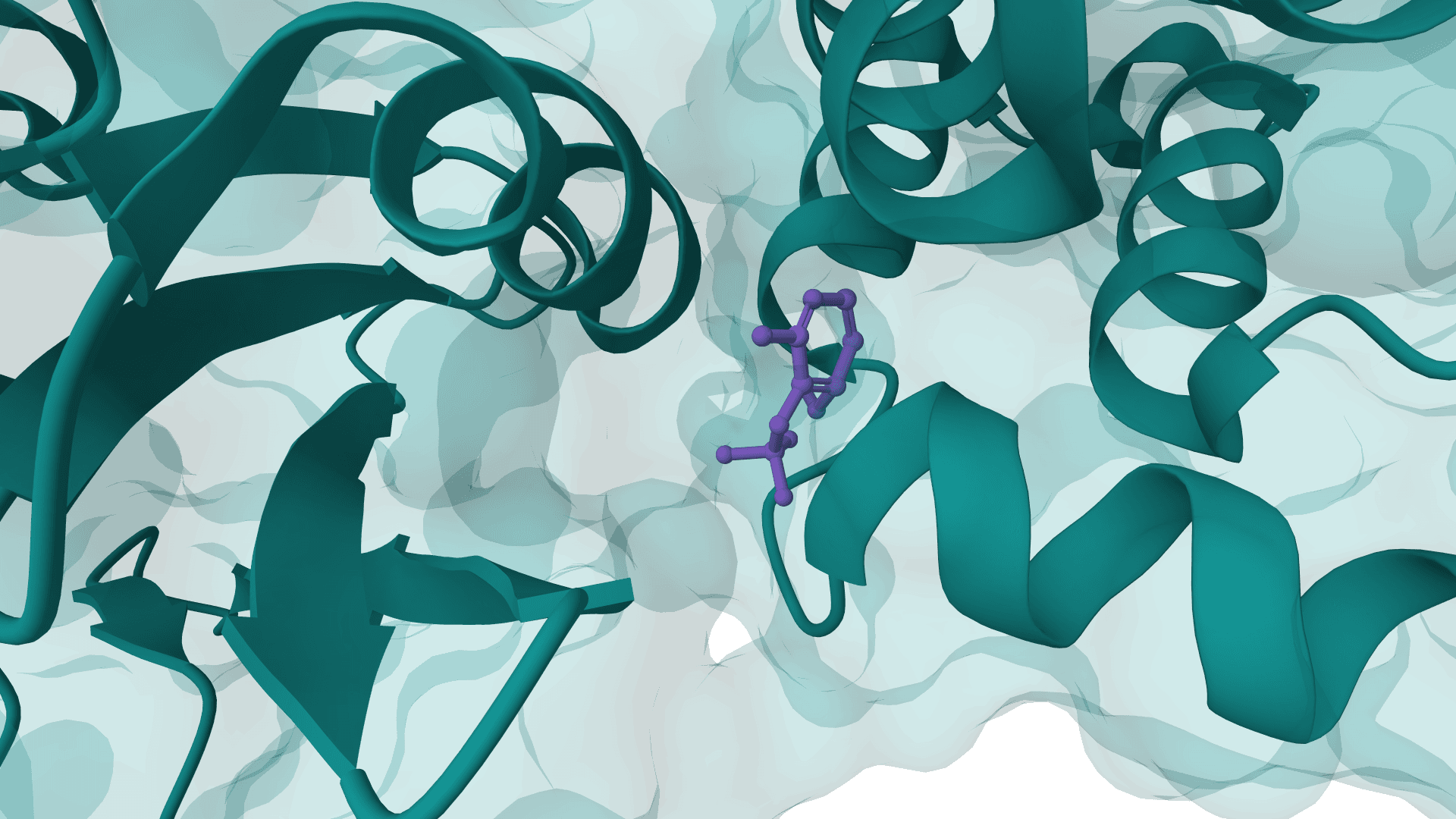

RFdiffusion 2

RFdiffusion2 is an atom-level enzyme active site scaffolding tool that generates protein scaffolds around your input motif. It requires an input PDB structure containing the active site residues to scaffold. For ligand-aware design, ligands must be embedded in the input PDB as HETATM records.

DiffAb

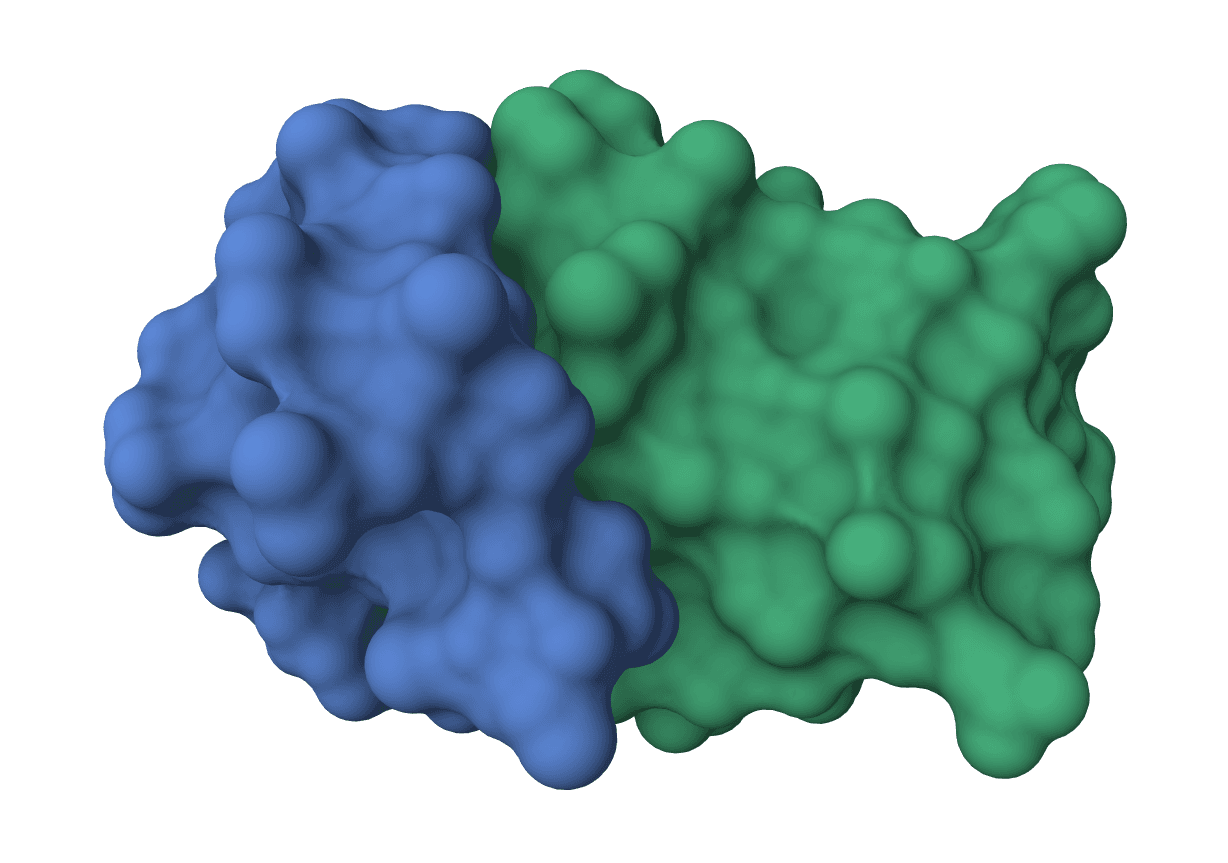

AI-powered antibody CDR design using equivariant diffusion models. Generates optimized complementarity-determining region (CDR) sequences and structures for antibodies targeting specific antigens. Supports single CDR, multi-CDR co-design, and fixed-backbone sequence design modes.

PepMLM

Design linear peptide binders for target proteins using a target sequence-conditioned masked language model. PepMLM generates peptide sequences optimized to bind specific protein targets based on ESM-2 protein language modeling.

BoltzGen

BoltzGen is a state-of-the-art AI model for designing protein and peptide binders against any biomolecular target. Using generative diffusion models, it creates novel binders (proteins, peptides, nanobodies) with nanomolar-level binding affinity.

RFantibody

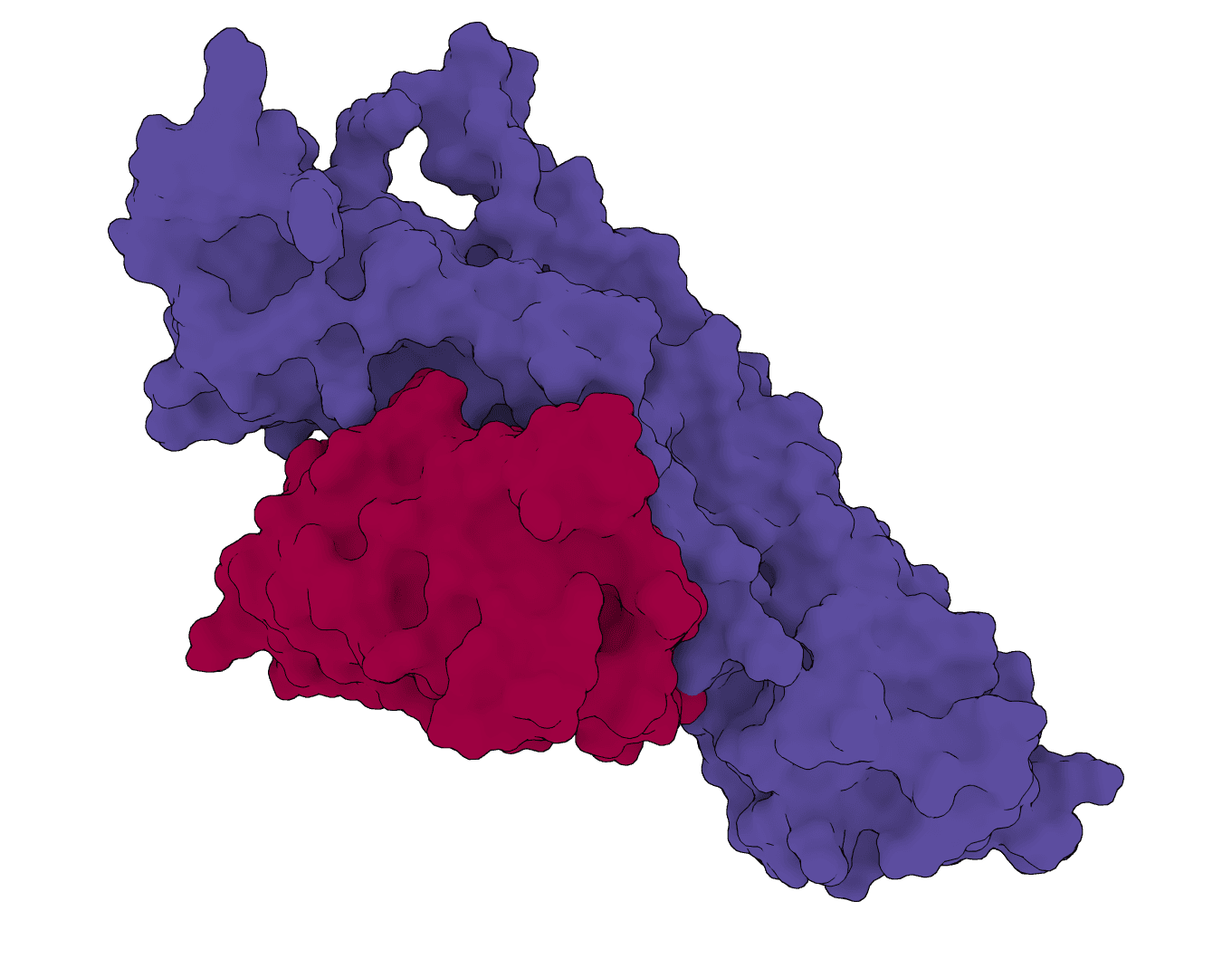

Structure-based de novo antibody and nanobody design pipeline combining antibody-tuned RFdiffusion, ProteinMPNN sequence design, and antibody-tuned RoseTTAFold2 filtering.

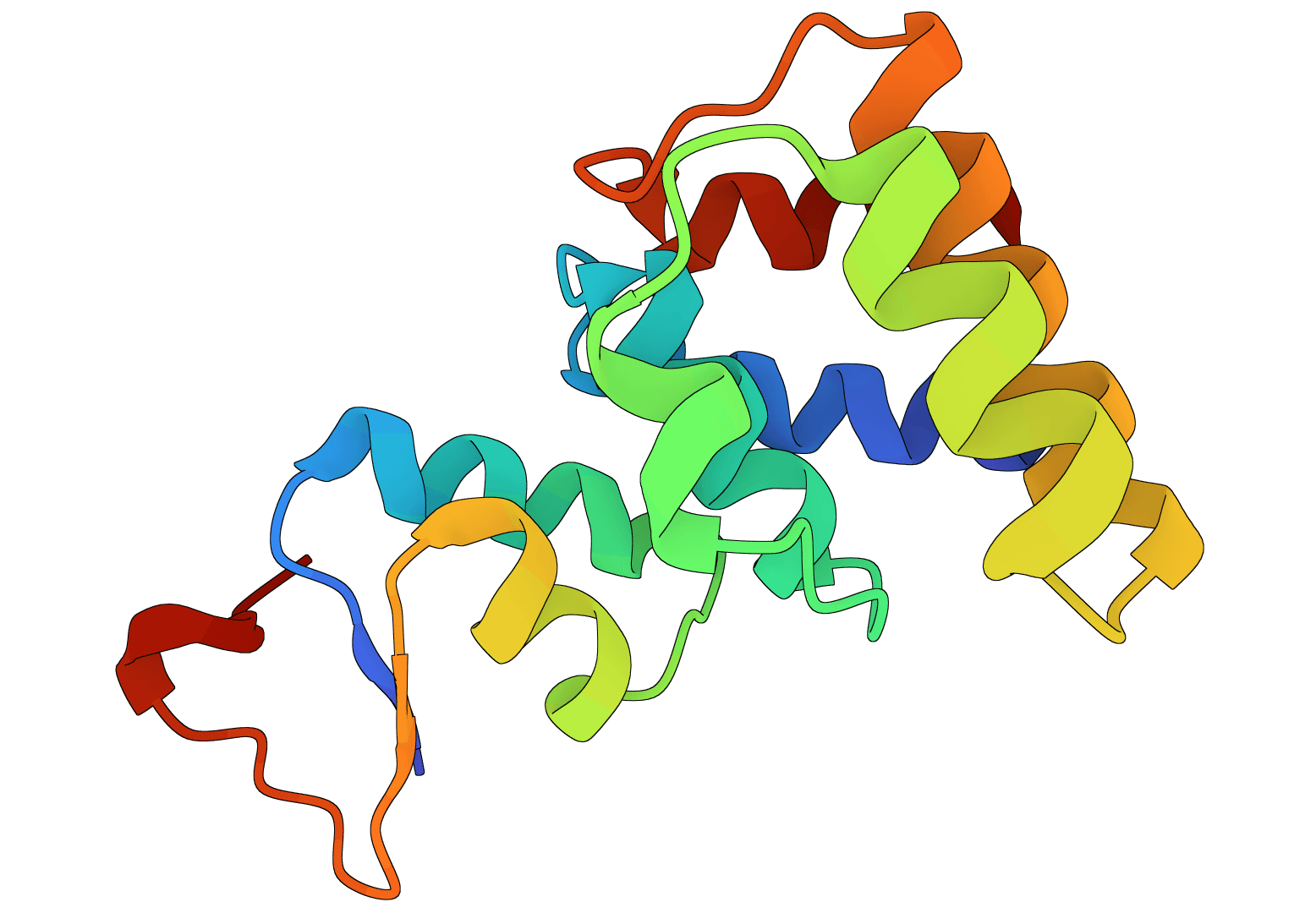

What is ProGen2?

ProGen2 is a family of autoregressive protein language models from Salesforce Research, trained to generate novel amino acid sequences by learning the statistical patterns of natural and metagenomic proteins. The family spans four sizes, from 151M to 6.4B parameters, and includes a domain-specific variant trained exclusively on antibody sequences.

Generation works by sampling from the model's learned distribution over protein sequence space, conditioned on an optional context string. Unlike structure-based design tools, ProGen2 operates entirely in sequence space. No input structure is required, and the model can generate proteins from scratch using only a starting token.
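To make "sampling from the learned distribution" concrete, here is a minimal sketch of left-to-right generation. The `toy_next_token_probs` function is a hypothetical stand-in for the real ProGen2 network (it is not part of any ProGen2 or ProteinIQ API), and treating the control tokens 1 and 2 as terminal tokens is an assumption of this sketch. Each step conditions on the full prefix, appends one sampled token, and stops at a terminal token or at the length limit, which counts the context.

```python
import random
from typing import Callable, Dict

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def toy_next_token_probs(prefix: str) -> Dict[str, float]:
    """Hypothetical stand-in for the model: uniform over amino acids,
    with a small fixed chance of emitting the terminal token '2'."""
    p_stop = 0.02
    probs = {aa: (1.0 - p_stop) / len(AMINO_ACIDS) for aa in AMINO_ACIDS}
    probs["2"] = p_stop
    return probs


def generate(
    context: str,
    next_token_probs: Callable[[str], Dict[str, float]],
    max_length: int,
    rng: random.Random,
    terminals: str = "12",  # assumption: these control tokens end a sequence
) -> str:
    """Sample tokens one at a time, each conditioned on everything before it."""
    seq = context
    while len(seq) < max_length:  # max_length includes the context tokens
        probs = next_token_probs(seq)
        tokens = list(probs)
        token = rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]
        seq += token
        if token in terminals:
            break  # a terminal token ends generation early
    return seq


print(generate("1", toy_next_token_probs, max_length=60, rng=random.Random(0)))
```

A real model would return a different distribution at every position; the loop structure, not the toy probabilities, is the point here.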

How to use ProGen2 online

ProGen2 on ProteinIQ runs on cloud GPU infrastructure, so no local installation, checkpoint downloads, or Python environment is needed. Enter an optional context string (or leave the default 1 for unconditioned generation), configure the model size and sampling parameters, and receive generated sequences in FASTA format.

Inputs

| Input | Description |
|---|---|
| Context / prompt | Starting token(s) for generation. Default 1 for general proteins; see Context and control tokens below. |

Settings

Model settings

| Setting | Description |
|---|---|
| Model | Checkpoint to use. Larger models produce higher-quality sequences but are slower. See Choosing a model. |
| Random seed | Seeds the random number generator for reproducible runs. Change to explore different outputs with identical settings. |
| Number of sequences | How many sequences to generate per run (1-50). |
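A script that prepares runs might check model settings against the documented limits before submitting them. The sketch below is hypothetical: the `ModelSettings` class, its field names, and the seed default are illustrative and not a ProteinIQ API; only the checkpoint names, the progen2-large default, and the 1-50 range come from this page.

```python
from dataclasses import dataclass

# Checkpoint names as listed on this page.
CHECKPOINTS = {
    "progen2-small", "progen2-medium", "progen2-base", "progen2-large",
    "progen2-BFD90", "progen2-xlarge", "progen2-oas",
}


@dataclass(frozen=True)
class ModelSettings:
    model: str = "progen2-large"  # documented default checkpoint
    seed: int = 0                 # hypothetical default; any integer works
    num_sequences: int = 1

    def __post_init__(self) -> None:
        # Reject values outside the documented options before any run starts.
        if self.model not in CHECKPOINTS:
            raise ValueError(f"unknown checkpoint: {self.model}")
        if not 1 <= self.num_sequences <= 50:
            raise ValueError("num_sequences must be between 1 and 50")


print(ModelSettings(num_sequences=10))
```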

Sampling settings

| Setting | Description |
|---|---|
| Top-p | Nucleus sampling threshold (0.01-1.0, default 0.95). Restricts each sampling step to the smallest set of tokens whose cumulative probability reaches this value. Lower values constrain outputs to more probable amino acids. |
| Temperature | Scales the probability distribution before sampling (0.01-2.0, default 0.2). Lower values produce more conservative, natural-looking sequences; higher values increase diversity at the cost of coherence. |
| Max generated length | Maximum sequence length in tokens, counting the context tokens (1-2048, default 256). The model may stop earlier if it predicts a terminal token. |
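Temperature and top-p are applied together at every step. The sketch below shows one sampling step using the standard nucleus-sampling recipe, not code taken from ProGen2 or ProteinIQ, and it assumes the model's raw scores (logits) for each candidate token are already available. It divides by temperature, applies a softmax, keeps the smallest top-ranked set whose cumulative probability reaches top-p, and draws from that set.

```python
import math
import random
from typing import Dict


def sample_token(logits: Dict[str, float], temperature: float, top_p: float,
                 rng: random.Random) -> str:
    # Temperature scaling: dividing by t < 1 sharpens the distribution.
    scaled = {tok: score / temperature for tok, score in logits.items()}
    # Numerically stable softmax.
    max_score = max(scaled.values())
    exp = {tok: math.exp(s - max_score) for tok, s in scaled.items()}
    total = sum(exp.values())
    ranked = sorted(((tok, e / total) for tok, e in exp.items()),
                    key=lambda item: item[1], reverse=True)
    # Nucleus (top-p): keep the smallest prefix whose cumulative mass >= top_p.
    nucleus, cumulative = [], 0.0
    for tok, p in ranked:
        nucleus.append((tok, p))
        cumulative += p
        if cumulative >= top_p:
            break
    # rng.choices renormalizes the kept weights implicitly.
    tokens = [tok for tok, _ in nucleus]
    weights = [p for _, p in nucleus]
    return rng.choices(tokens, weights=weights, k=1)[0]


# Hypothetical scores for four residues at one position.
logits = {"A": 2.0, "L": 1.5, "G": 0.5, "W": -1.0}
print(sample_token(logits, temperature=0.2, top_p=0.95, rng=random.Random(1)))
```

With these made-up scores at t=0.2 and p=0.95, only A and L survive the nucleus cut, so G and W can never be drawn; with a very small top-p the step becomes greedy and always returns A.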

Output

Generated sequences are returned as a FASTA file. Each sequence header includes the model name and generated length. A JSON detail file with per-sequence metadata is also available for download.
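For downstream ranking or screening, the FASTA file can be read with a few lines of standard-library Python. The headers in the example are hypothetical: this page only says headers include the model name and generated length, so the sketch keeps each header verbatim instead of assuming a format.

```python
from typing import Dict, List, Optional


def read_fasta(text: str) -> Dict[str, str]:
    """Map each header (without '>') to its sequence, joining wrapped lines."""
    records: Dict[str, str] = {}
    header: Optional[str] = None
    chunks: List[str] = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                records[header] = "".join(chunks)
            header, chunks = line[1:], []
        elif header is not None:
            chunks.append(line)
    if header is not None:
        records[header] = "".join(chunks)
    return records


# Hypothetical file contents; real ProteinIQ headers may differ.
example = """>seq_0 progen2-large length=12
MKTLLVAGSEEK
>seq_1 progen2-large length=8
MSNQAL
RW
"""
for name, seq in read_fasta(example).items():
    print(name, len(seq))
```

Duplicate headers would overwrite earlier records in this sketch; the JSON detail file is the better source if per-sequence metadata matters.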

Context and control tokens

The context string is the seed passed to the tokenizer before generation begins. It conditions each subsequent token prediction on everything that came before, so it acts both as a prompt and a partial sequence prefix.

The default context 1 is a control token from the training data format. ProGen2 marks the two ends of each training sequence with control tokens: 1 at the N-terminus and 2 at the C-terminus, and sequences were also presented in reverse (C-to-N) orientation. Providing 1 as context therefore starts a protein at its N-terminus and asks for residues in the usual N-to-C order, and a generated 2 marks the end of the sequence. Starting the context with 2 would instead request a sequence written in reverse order, which is rarely what design workflows need. These tokens are stripped from the output before display, so what the user sees is a clean amino acid sequence.
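Stripping the control tokens from raw output is a simple string operation. A minimal sketch, assuming the raw output is a plain string in which the digits 1 and 2 appear only as control tokens (residues are letters, so the two never collide); `strip_control_tokens` is a hypothetical helper name.

```python
CONTROL_TOKENS = "12"


def strip_control_tokens(raw: str) -> str:
    """Remove the 1/2 control tokens, leaving only amino acid letters."""
    return "".join(ch for ch in raw if ch not in CONTROL_TOKENS)


print(strip_control_tokens("1MKTLLVAGSEEK2"))  # MKTLLVAGSEEK
```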

Context can also be a partial protein sequence. Prefixing with 1MKTLL generates continuations from that N-terminal fragment, which is useful for extending sequences in a particular evolutionary neighborhood. For progen2-oas, context taken from antibody framework regions (e.g., the opening residues of a heavy-chain variable region) guides generation toward biologically plausible antibody sequences.
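Building a prefixed context from an N-terminal fragment could look like the sketch below. The `build_context` helper is hypothetical; it validates the fragment against the 20 standard amino acid letters (an assumption, since the model vocabulary may accept other letters) and prepends the control token 1.

```python
STANDARD_AA = set("ACDEFGHIKLMNPQRSTVWY")


def build_context(fragment: str = "", control_token: str = "1") -> str:
    """Normalize an N-terminal fragment and prepend the control token."""
    fragment = fragment.strip().upper()
    bad = sorted(set(fragment) - STANDARD_AA)
    if bad:
        raise ValueError(f"non-standard residues in fragment: {bad}")
    return control_token + fragment


print(build_context("mktll"))             # 1MKTLL
print(build_context("EVQLVESGGGLVQPGG"))  # 1EVQLVESGGGLVQPGG
```

The second call uses a typical heavy-chain framework 1 opening purely as an illustrative prefix for progen2-oas; any fragment works the same way.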

Choosing a model

| Model | Parameters | Training data | Best for |
|---|---|---|---|
| progen2-small | 151M | UniRef90 + BFD30 | Fast iteration, high-throughput exploration |
| progen2-medium | 764M | UniRef90 + BFD30 | Balanced quality/speed |
| progen2-base | 764M | UniRef90 + BFD30 | Same as medium (different fine-tuning) |
| progen2-large | 2.7B | UniRef90 + BFD30 | Default; good quality with reasonable runtime |
| progen2-BFD90 | 2.7B | BFD90 | Broader sequence diversity, metagenomic coverage |
| progen2-xlarge | 6.4B | UniRef90 + BFD30 | Highest quality; significantly slower |
| progen2-oas | 764M | Observed Antibody Space | Antibody and nanobody sequence generation |

For most general protein generation tasks, progen2-large (the default) is a reasonable starting point. If the goal is antibody sequence generation, progen2-oas is the appropriate choice because it was trained on immune repertoire data from the Observed Antibody Space (OAS) database rather than general protein databases.
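For scripted workflows, the model table can be encoded as a small lookup. The `pick_checkpoint` rule is a hypothetical helper that mirrors the guidance on this page (progen2-oas for antibodies, progen2-small for quick iteration, otherwise the progen2-large default); it is not a ProteinIQ feature.

```python
# Parameter counts from the model table on this page.
CHECKPOINT_PARAMS = {
    "progen2-small": 151e6,
    "progen2-medium": 764e6,
    "progen2-base": 764e6,
    "progen2-large": 2.7e9,
    "progen2-BFD90": 2.7e9,
    "progen2-xlarge": 6.4e9,
    "progen2-oas": 764e6,
}


def pick_checkpoint(antibody: bool = False, fast: bool = False) -> str:
    if antibody:
        return "progen2-oas"    # trained on Observed Antibody Space data
    if fast:
        return "progen2-small"  # smallest model, quickest turnaround
    return "progen2-large"      # documented default


name = pick_checkpoint(antibody=True)
print(name, f"{CHECKPOINT_PARAMS[name] / 1e6:.0f}M parameters")
```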

Sampling parameters in practice

Temperature and top-p both control how conservatively the model samples at each position. At the default settings (t=0.2, p=0.95), ProGen2 tends to produce sequences that resemble natural proteins in amino acid composition and secondary structure propensity. Raising temperature above 0.5 increases sequence diversity but can produce unusual amino acid patterns; temperatures above 1.0 are rarely useful for most design applications.
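The effect of temperature can be checked numerically: dividing the same scores by a smaller temperature concentrates probability on the top-ranked residue, which lowers the Shannon entropy of the per-position distribution. The logits below are made-up numbers for illustration, not ProGen2 outputs.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def entropy_bits(probs: List[float]) -> float:
    return -sum(p * math.log2(p) for p in probs if p > 0)


logits = [2.0, 1.5, 0.5, -1.0]  # hypothetical scores for four residues
for t in (0.2, 0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(f"t={t}: top prob {max(probs):.2f}, entropy {entropy_bits(probs):.2f} bits")
```

Entropy rises monotonically with temperature here, which is the quantitative version of "higher values increase diversity".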

Generating multiple sequences at different random seeds, rather than adjusting temperature, is often a better way to explore sequence space while maintaining output quality.
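Seed-based exploration is easy to see with a toy sampler: each seed gives a reproducible draw, and changing only the seed gives a different draw from the same low-temperature distribution. The `toy_sequence` function and its per-residue scores are hypothetical stand-ins, not ProGen2.

```python
import math
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
# Hypothetical per-residue scores; a real model recomputes these at every position.
SCORES = {aa: -0.1 * i for i, aa in enumerate(AMINO_ACIDS)}


def toy_sequence(seed: int, length: int = 30, temperature: float = 0.2) -> str:
    rng = random.Random(seed)  # a dedicated RNG makes each run reproducible
    weights = [math.exp(SCORES[aa] / temperature) for aa in AMINO_ACIDS]
    return "".join(rng.choices(AMINO_ACIDS, weights=weights, k=length))


batch = {seed: toy_sequence(seed) for seed in range(5)}
for seed, seq in batch.items():
    print(seed, seq)
```

Temperature stays fixed across the batch, so every sequence comes from the same conservative distribution; only the random draw changes.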

ProGen2 vs alternative sequence design tools

ProGen2 generates sequences from nothing but a statistical model of protein sequence space. This makes it distinct from several related approaches:

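- RFdiffusion can condition generation on structural motifs and scaffold constraints, and EvoDiff can scaffold motifs and inpaint around fixed residues. ProGen2 can do neither: it conditions only on a left-to-right prefix and has no structural awareness.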

- ProteinMPNN solves the inverse folding problem (given a backbone structure, design sequences that fold into it). ProGen2 works without any structure input.

- RFantibody and IgDesign are purpose-built for antibody design with structural context. For antibody-like sequences without a structural constraint, progen2-oas is an alternative starting point.

The practical advantage of ProGen2 is simplicity: no receptor structure, no scaffold, no MSA. The limitation is the same: generated sequences are sampled from a distribution and have no guarantee of folding into a functional structure without downstream validation.