Related tools

AbLang-2

Antibody-specific language model for predicting non-germline residues (NGL) in antibody sequences. AbLang-2 addresses germline bias in existing antibody language models by focusing on somatic hypermutation patterns, enabling more accurate prediction of amino acid likelihoods and generation of context-aware embeddings for antibody sequences.

ESM-2

ESM-2 is a 650M-parameter protein language model from Meta AI trained on 250M protein sequences. It generates rich sequence representations for downstream tasks like structure prediction, function annotation, and variant effect prediction.

Chou-Fasman

Predict protein secondary structure using the classic Chou-Fasman algorithm, which is based on amino acid propensities.

DR-BERT

DR-BERT is a compact protein language model that predicts intrinsically disordered regions (IDRs) in proteins. It outputs per-residue disorder probability scores (0–1) from amino acid sequences, enabling fast and accurate annotation of disordered regions without structural data.

RNAcofold

RNAcofold predicts the joint secondary structure of two interacting RNA molecules and optionally reports partition-function and concentration-dependent equilibrium metrics.

RNAdos

RNAdos calculates density-of-states summaries for RNA sequences, reporting representative structures and state counts across energy bands.

RNAeval

RNAeval calculates the free energy of an RNA secondary structure for a given sequence. Use it to evaluate whether a proposed structure is thermodynamically favorable.

RNAfold

RNAfold predicts RNA secondary structure using minimum free energy (MFE) algorithms and optionally returns partition-function ensemble metrics when explicitly enabled.

RNALfold

RNALfold reports locally stable RNA secondary structures within a sliding window and returns their start and end positions on the input sequence.

RNAplfold

RNAplfold computes local base pair probabilities using a sliding window approach. Useful for analyzing accessibility and identifying binding sites in long RNA sequences.

What is ProstT5?

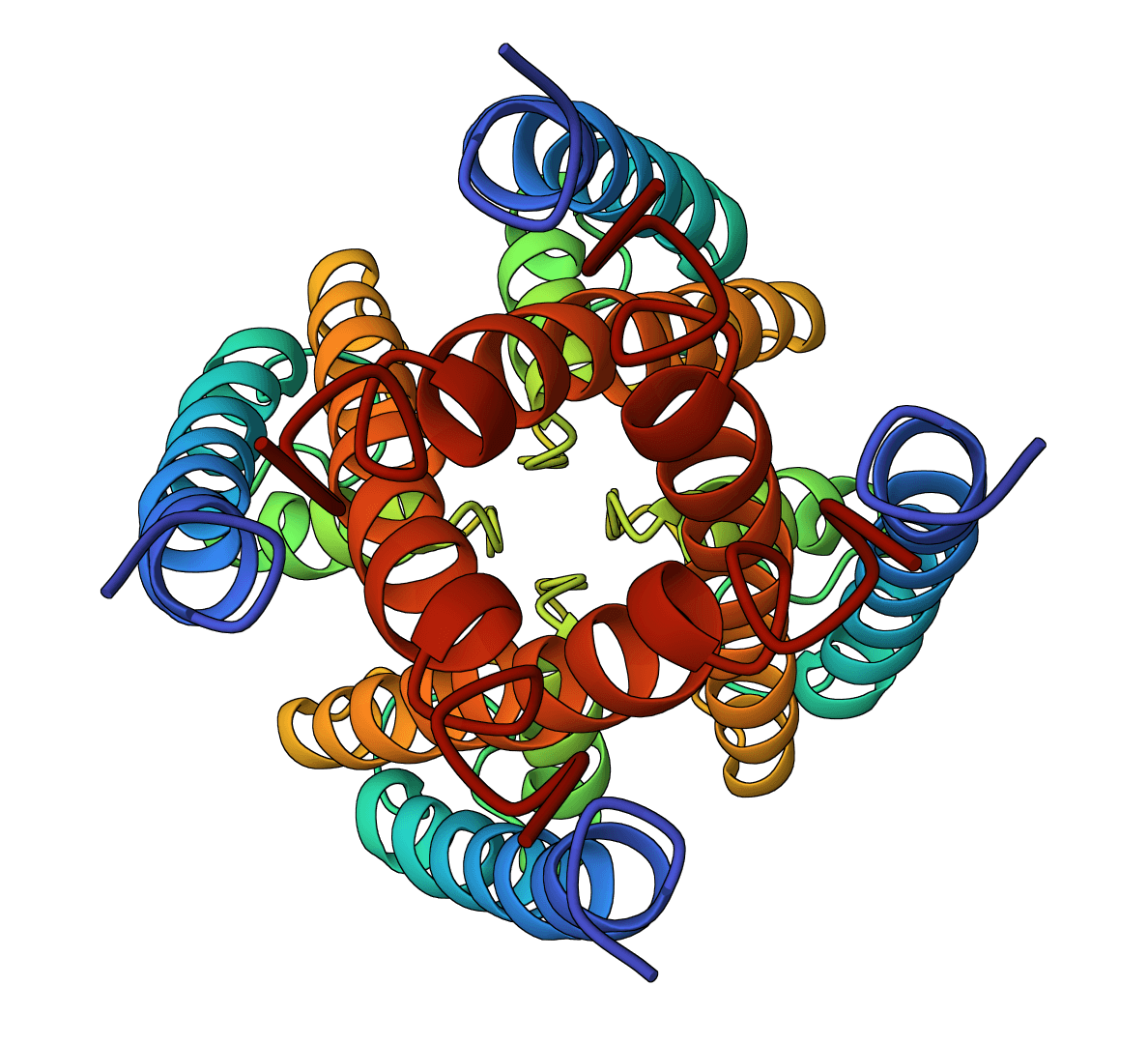

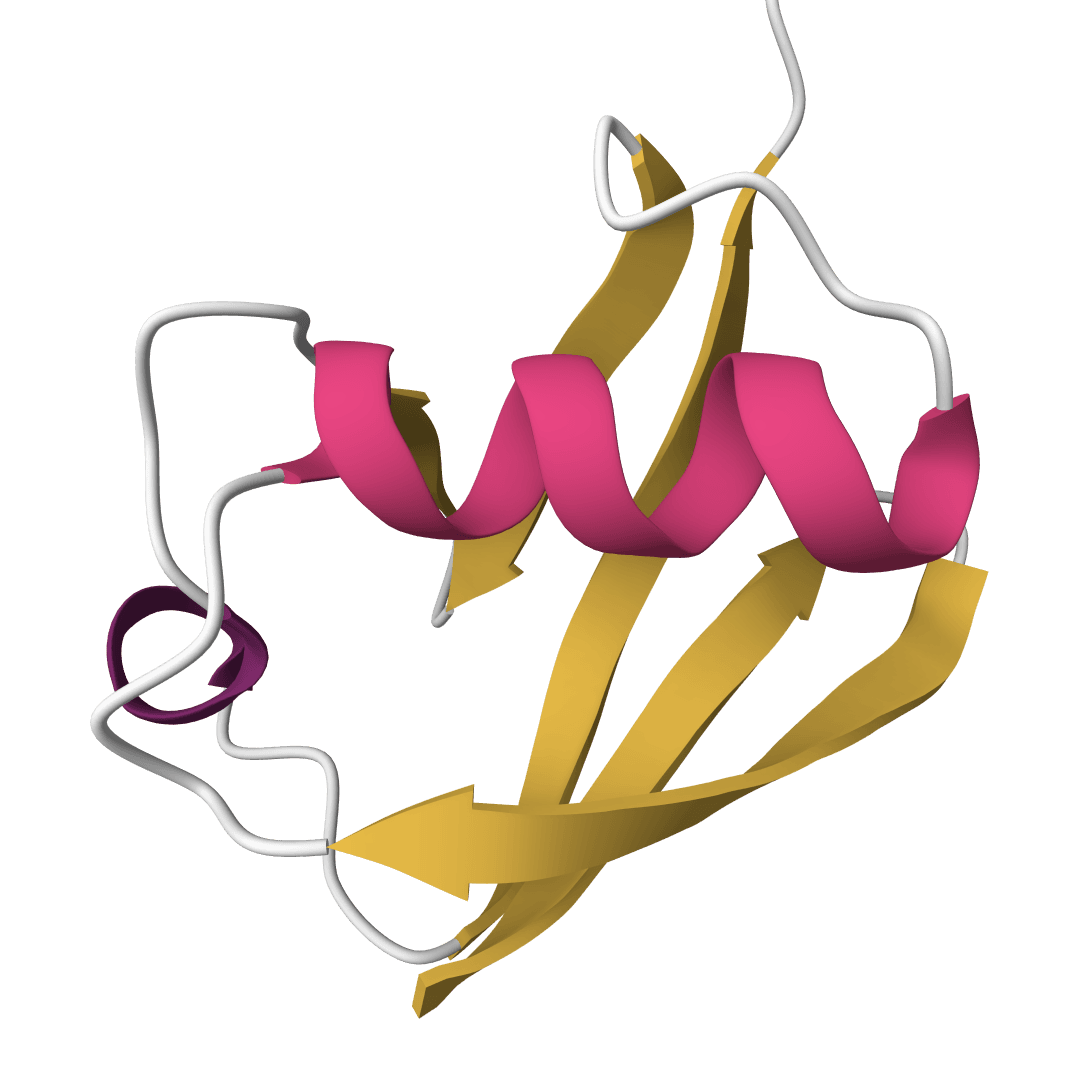

ProstT5 is a bilingual protein language model that translates between amino acid sequences and 3Di structural tokens, a compact structural alphabet used by Foldseek. Instead of predicting full atomic coordinates, ProstT5 represents local protein geometry as a string over an alphabet of 20 lowercase letters, one letter per residue. That makes structural information available to sequence-style workflows such as fast fold search, inverse folding, and embedding extraction.

The model is based on ProtT5-XL-U50 and was fine-tuned by the Rostlab team on 17 million AlphaFold Database structures. Its output should be treated as a structural-token prediction, not as a replacement for a coordinate-level model such as AlphaFold2.

How to use ProstT5 online

ProteinIQ runs ProstT5 online for sequence-to-structure-token translation, inverse folding from 3Di strings, and embedding extraction. Jobs accept FASTA, raw amino acid sequences, raw 3Di tokens, or RCSB PDB IDs, then return generated FASTA files, generation settings, per-sequence text outputs, or HDF5 embeddings.

Inputs

| Input | Accepted format | Notes |
|---|---|---|
| Protein sequence | FASTA or raw amino acid sequence | Whitespace and gap characters are removed. Rare or ambiguous residues U, Z, O, and B are converted to X, matching upstream preprocessing. |
| 3Di tokens | Raw lowercase 3Di string or FASTA | Used with 3Di to Sequence. Tokens are normalized to lowercase after whitespace and gap removal. |
| PDB ID | Four-character RCSB ID beginning with a digit, for example 1UBQ | ProteinIQ fetches FASTA sequence data from RCSB. Short amino acid sequences such as ACDE are treated as sequences, not as PDB IDs. |
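
The normalization rules in the table can be sketched in a few lines of Python. This is an illustration only, not the ProteinIQ wrapper code: the function names are hypothetical, the set of gap characters (`-` and `.`) is an assumption, and so is the upper-casing of protein input before the U/Z/O/B replacement.

```python
import re

# Assumption: "-" and "." are treated as gap characters alongside whitespace.
GAPS_AND_WHITESPACE = re.compile(r"[\s\-.]")
RARE_RESIDUES = re.compile(r"[UZOB]")
# Four characters, the first of which is a digit (e.g. 1UBQ).
PDB_ID_PATTERN = re.compile(r"[0-9][A-Za-z0-9]{3}")


def normalize_protein(raw: str) -> str:
    """Remove whitespace and gaps, then map U, Z, O, and B to X."""
    cleaned = GAPS_AND_WHITESPACE.sub("", raw).upper()  # upper-casing is an assumption
    return RARE_RESIDUES.sub("X", cleaned)


def normalize_3di(raw: str) -> str:
    """Remove whitespace and gaps, then lowercase the 3Di tokens."""
    return GAPS_AND_WHITESPACE.sub("", raw).lower()


def looks_like_pdb_id(raw: str) -> bool:
    """True for four-character IDs that begin with a digit."""
    return PDB_ID_PATTERN.fullmatch(raw.strip()) is not None
```

Because a PDB ID must begin with a digit, a short peptide such as `ACDE` fails `looks_like_pdb_id` and is handled as a sequence.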

Multi-record FASTA input is supported. Output identifiers are sanitized before being used as filenames or HDF5 dataset names.
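
As a rough sketch of what multi-record handling and identifier sanitization could look like, the following Python splits FASTA text into records and makes each identifier safe to use as a filename or HDF5 dataset name (where `/` would otherwise create nested groups). The function names, the choice of the first header word as identifier, the replacement character, and the fallback name are all assumptions; the actual rules used by ProteinIQ are not documented here.

```python
import re


def parse_fasta(text: str) -> list[tuple[str, str]]:
    """Split multi-record FASTA text into (identifier, sequence) pairs."""
    records = []
    header = None
    chunks = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                records.append((header, "".join(chunks)))
            # Assumption: the identifier is the first word of the header line.
            parts = line[1:].split()
            header = parts[0] if parts else ""
            chunks = []
        elif header is not None:
            chunks.append(line)
    if header is not None:
        records.append((header, "".join(chunks)))
    return records


def sanitize_identifier(identifier: str, fallback: str = "sequence") -> str:
    """Replace characters outside [A-Za-z0-9._-] with underscores."""
    safe = re.sub(r"[^A-Za-z0-9._-]+", "_", identifier).strip("._")
    return safe or fallback
```

With these rules, a header such as `>sp|P0CG48|UBC_HUMAN Polyubiquitin-C` would yield the identifier `sp_P0CG48_UBC_HUMAN`.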

Settings

| Setting | Default | Description |
|---|---|---|
| Translation mode | Sequence to 3Di | Selects the task. Sequence to 3Di predicts lowercase 3Di tokens from amino acids. 3Di to Sequence generates uppercase amino acid sequences from 3Di tokens. Extract embeddings returns ProstT5 encoder representations. |
| Use half precision (FP16) | Enabled | Runs the model in FP16 on GPU for faster inference. Disabling this setting uses FP32 for maximum numerical precision. |
| Mean-pool embeddings per protein | Disabled | Embeddings mode only. Disabled returns one 1024-dimensional vector per residue. Enabled averages residues into one 1024-dimensional vector per input sequence. |
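
To make the mean-pooling setting concrete, here is a minimal pure-Python sketch of averaging per-residue vectors into a single per-protein vector. The function name is hypothetical and the example uses 2-dimensional toy vectors instead of 1024-dimensional ones; with NumPy arrays the same operation would be `embeddings.mean(axis=0)`.

```python
def mean_pool(per_residue: list[list[float]]) -> list[float]:
    """Average an L x D list of residue vectors into one D-dimensional vector."""
    if not per_residue:
        raise ValueError("cannot pool an empty embedding")
    length = len(per_residue)
    dim = len(per_residue[0])
    # Average each embedding dimension across all residues.
    return [sum(row[j] for row in per_residue) / length for j in range(dim)]


# Toy example: three residues, two embedding dimensions.
toy_embedding = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
pooled = mean_pool(toy_embedding)
```

Pooling discards positional information, so per-residue output is the better fit for residue-level tasks, while the pooled vector suits whole-protein comparisons.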

Results

| Mode | Primary output | Additional files | Interpretation |
|---|---|---|---|
| Sequence to 3Di | generated_sequences.fasta | gen_config.json and one sanitized .txt file per input sequence | Each output sequence is a lowercase 3Di string with one token per input residue. It can be used as a structural proxy for fold search or downstream modeling. |
| 3Di to Sequence | generated_sequences.fasta | gen_config.json and one sanitized .txt file per input sequence | Each output sequence is an amino acid sequence compatible with the input 3Di pattern. This is inverse folding from a compressed structural alphabet, not atom-level backbone design. |
| Extract embeddings | embedding_summary.txt | prostt5_embeddings.h5 | The HDF5 file contains float32 embeddings. Per-residue mode stores an L x 1024 dataset per sequence. Mean-pooled mode stores a single 1024-dimensional vector per sequence. |
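
The dataset shapes above imply a simple size estimate for the raw embedding data. The sketch below only multiplies out the float32 storage per sequence; it ignores HDF5 file overhead and any compression, and it says nothing about GPU memory during inference. The function name is hypothetical.

```python
EMBEDDING_DIM = 1024
BYTES_PER_FLOAT32 = 4


def embedding_bytes(num_residues: int, mean_pooled: bool = False) -> int:
    """Raw float32 size of one sequence's embedding dataset, in bytes."""
    rows = 1 if mean_pooled else num_residues
    return rows * EMBEDDING_DIM * BYTES_PER_FLOAT32


per_residue_size = embedding_bytes(1000)               # 4_096_000 bytes, about 3.9 MiB
pooled_size = embedding_bytes(1000, mean_pooled=True)  # 4_096 bytes
```

Per-residue output grows linearly with sequence length, while the mean-pooled vector stays at 4 KiB regardless of length.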

gen_config.json records the generation parameters used by the wrapper. The sequence-to-3Di mode uses the upstream sampling configuration with beam search, top-p sampling, top-k sampling, temperature, and repetition penalty. The inverse direction uses the upstream 3Di-to-amino-acid sampling configuration.

How ProstT5 works

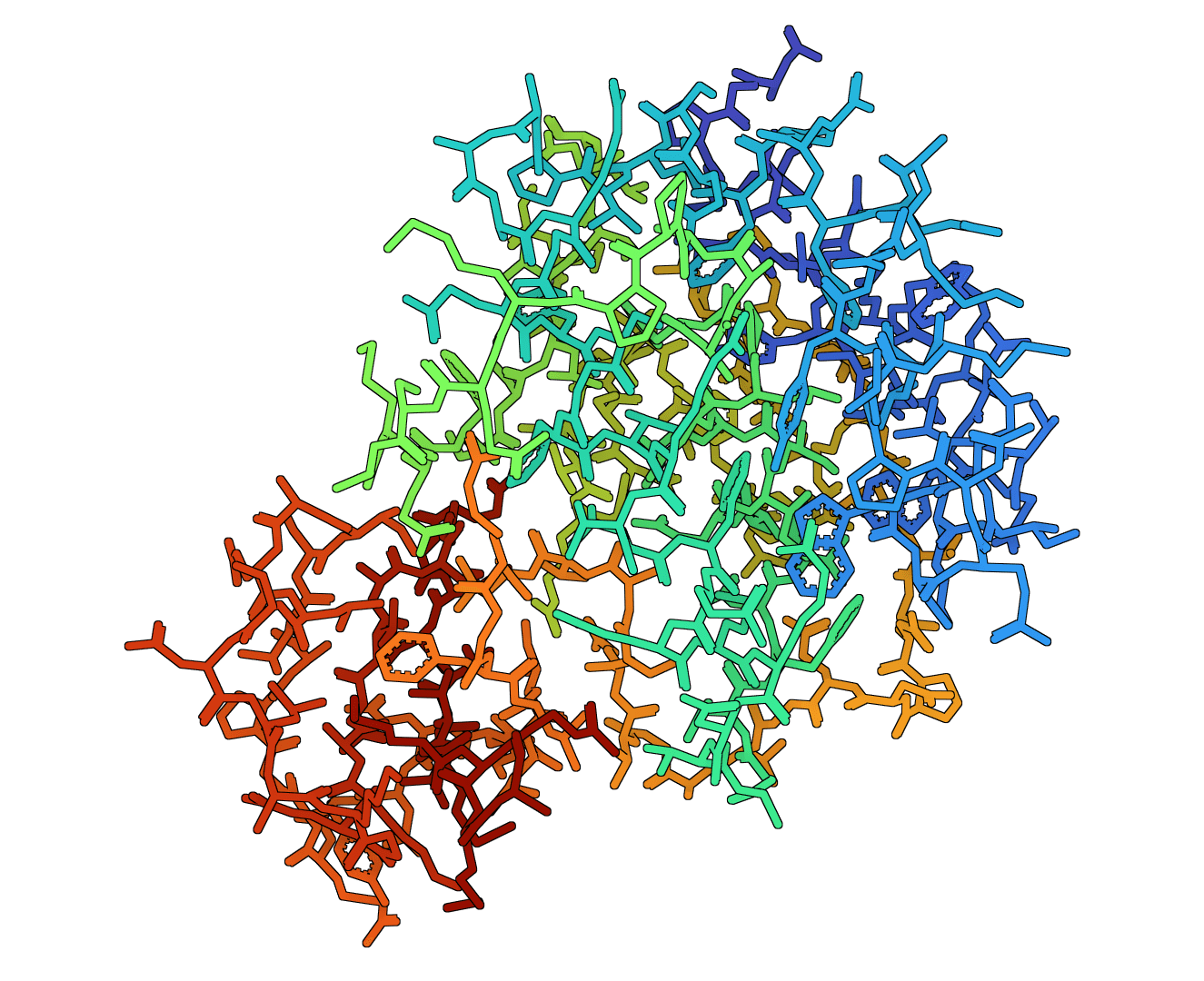

ProstT5 treats amino acid sequences and 3Di strings as two related languages. Amino acid inputs are represented with uppercase residue symbols. 3Di inputs use lowercase structural tokens generated from local residue environments in three-dimensional structures.

Directional prefixes tell the model which language to produce:

- `<AA2fold>`: amino acid sequence to 3Di tokens, or amino acid sequence embeddings

- `<fold2AA>`: 3Di tokens to amino acid sequence, or 3Di token embeddings
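
As a sketch of how a prefixed model input might be assembled, the following assumes the upstream convention of separating residues or tokens with single spaces and placing the prefix in front. The function name is hypothetical, and tokenization itself (done by the model's tokenizer) is not shown.

```python
def build_model_input(sequence: str, input_is_amino_acids: bool) -> str:
    """Prepend the directional prefix and space-separate the tokens.

    Amino acid input takes <AA2fold>; 3Di input takes <fold2AA>. The same
    prefix choice applies to translation and to embedding extraction.
    """
    prefix = "<AA2fold>" if input_is_amino_acids else "<fold2AA>"
    return prefix + " " + " ".join(sequence)


aa_input = build_model_input("MKT", input_is_amino_acids=True)
three_di_input = build_model_input("dvv", input_is_amino_acids=False)
```

Here `aa_input` is `"<AA2fold> M K T"` and `three_di_input` is `"<fold2AA> d v v"`. The prefix follows the language of the input, which is why the same two prefixes cover both translation and embedding modes.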

For translation tasks, the decoder generates an output sequence with the same length as the normalized input. Mode-specific forbidden-token constraints prevent amino acid symbols from appearing in 3Di outputs and prevent 3Di-only symbols from appearing in amino acid outputs. If generation returns a rare length mismatch, the wrapper corrects the output length to preserve the one-token-per-residue contract.
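
The one-token-per-residue contract and the alphabet separation can be checked on any downloaded output with a few lines of Python. This is a hypothetical validation helper, not the wrapper's own correction logic, which is not described in detail here. It assumes that generated amino acid sequences use only the 20 standard residues and that 3Di uses the lowercase forms of the same 20 letters.

```python
THREE_DI_ALPHABET = set("acdefghiklmnpqrstvwy")
# Assumption: generated protein sequences contain only the 20 standard residues.
AMINO_ACID_ALPHABET = set("ACDEFGHIKLMNPQRSTVWY")


def check_translation(normalized_input: str, output: str, output_is_3di: bool) -> list[str]:
    """Return a list of problems; an empty list means the output looks valid."""
    problems = []
    if len(output) != len(normalized_input):
        problems.append(
            f"length mismatch: input {len(normalized_input)}, output {len(output)}"
        )
    allowed = THREE_DI_ALPHABET if output_is_3di else AMINO_ACID_ALPHABET
    unexpected = sorted(set(output) - allowed)
    if unexpected:
        problems.append("unexpected symbols: " + "".join(unexpected))
    return problems
```

For example, `check_translation("MKT", "dvv", output_is_3di=True)` returns an empty list, while an output containing an uppercase letter in 3Di mode is reported as an unexpected symbol.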

For embeddings, ProteinIQ runs the ProstT5 encoder and stores the hidden representation for each residue token. These embeddings combine sequence and structure-aware information learned during ProstT5 fine-tuning. For broader sequence-only embeddings, ESM-2 is often a better baseline.

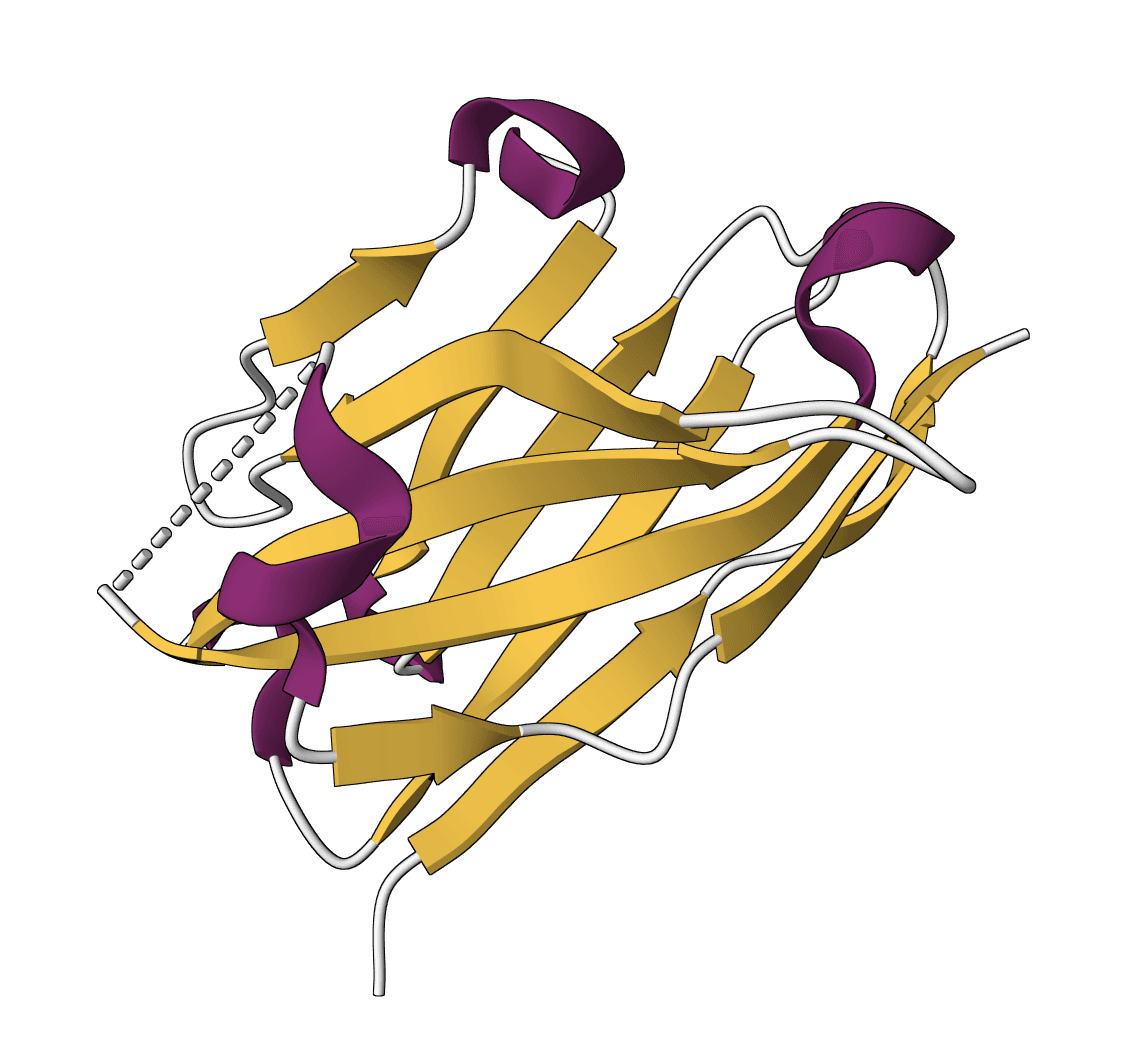

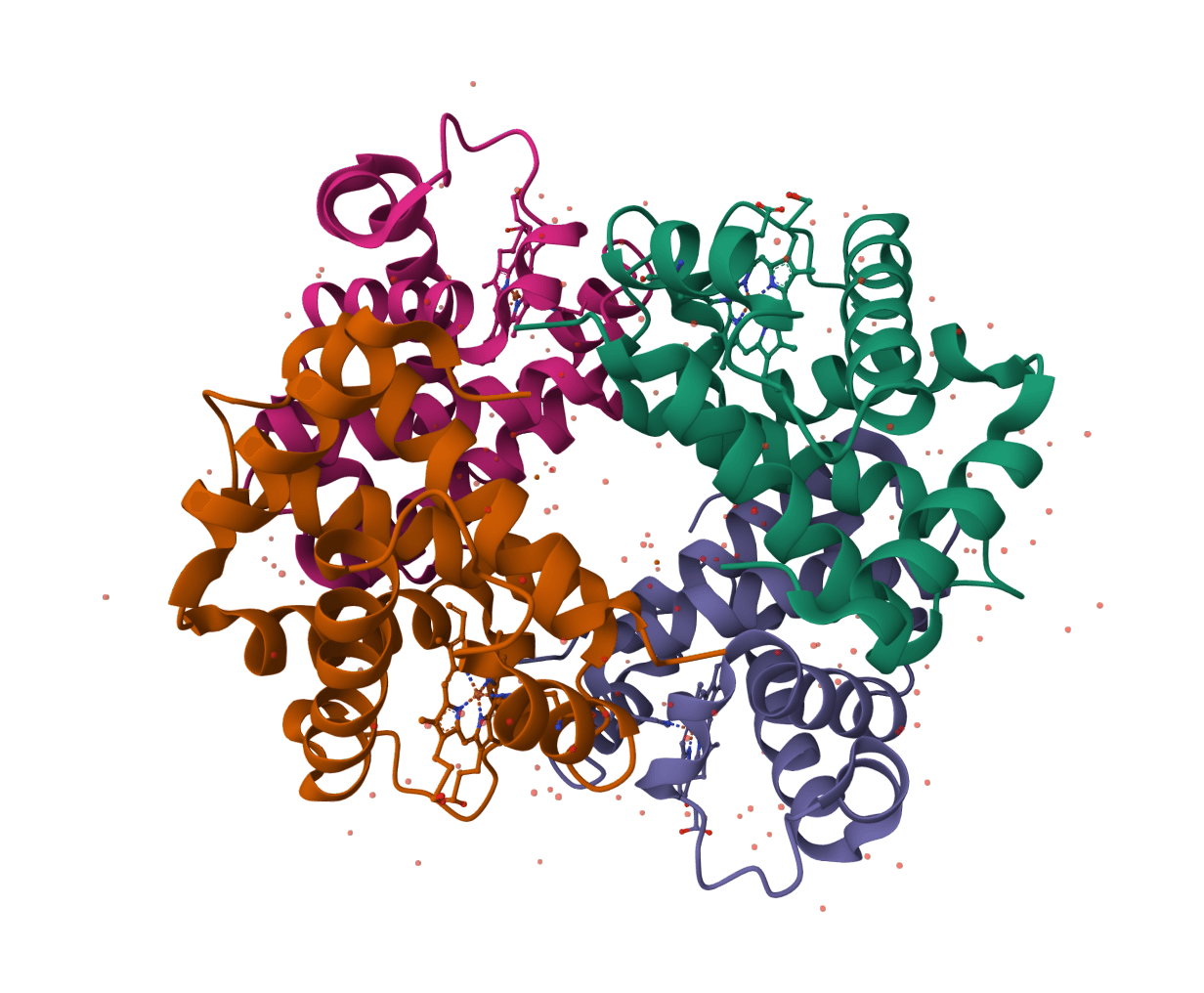

3Di structural tokens

3Di is the structural alphabet introduced by Foldseek. It converts a protein backbone environment into a one-dimensional sequence over 20 token states, making structural similarity searchable with fast sequence-alignment machinery.

The representation is useful because it captures fold-level information without storing atomic coordinates. It is also lossy. Side-chain geometry, ligand contacts, alternate conformations, and detailed interface geometry are not preserved in a 3Di string.

When to use ProstT5 vs alternatives

| Task | Better choice | Why |
|---|---|---|
| Fast structural-token prediction from sequence | ProstT5 | Produces 3Di strings directly without first predicting a full 3D model. |
| Searching for structural homologs | Foldseek, often with ProstT5-generated 3Di | Foldseek performs the actual structural search. ProstT5 is useful when only sequence is available. |
| Full 3D coordinate prediction | AlphaFold2 | AlphaFold2 predicts atomic coordinates and confidence scores; ProstT5 predicts structural tokens. |
| Protein language-model embeddings | ProstT5 or ESM-2 | ProstT5 embeddings include 3Di-aware training signal. ESM-2 is a strong sequence-only embedding baseline. |
| Inverse folding from a full backbone | ESM-IF1 | ESM-IF1 conditions on 3D backbone coordinates. ProstT5 conditions on compressed 3Di tokens. |

Practical limitations

- 3Di is compressed structure: A 3Di sequence captures local geometry but does not encode full atomic coordinates, side-chain packing, cofactors, or protein-ligand interactions.

- No confidence scores: ProstT5 does not return pLDDT-style confidence values. Generated tokens and sequences should be validated with downstream structural or functional checks.

- Single-chain focus: The model is most appropriate for individual protein chains. Protein complexes, multimer interfaces, and ligand-bound conformations are outside the direct modeling target.

- Very long proteins require caution: Inputs longer than about 1000 residues can be slower and more memory intensive, especially for per-residue embeddings.

- Inverse folding is approximate: 3Di-to-sequence mode generates sequences compatible with a structural-token pattern, but the 3Di string does not contain all constraints needed for atomically precise design.