Related tools

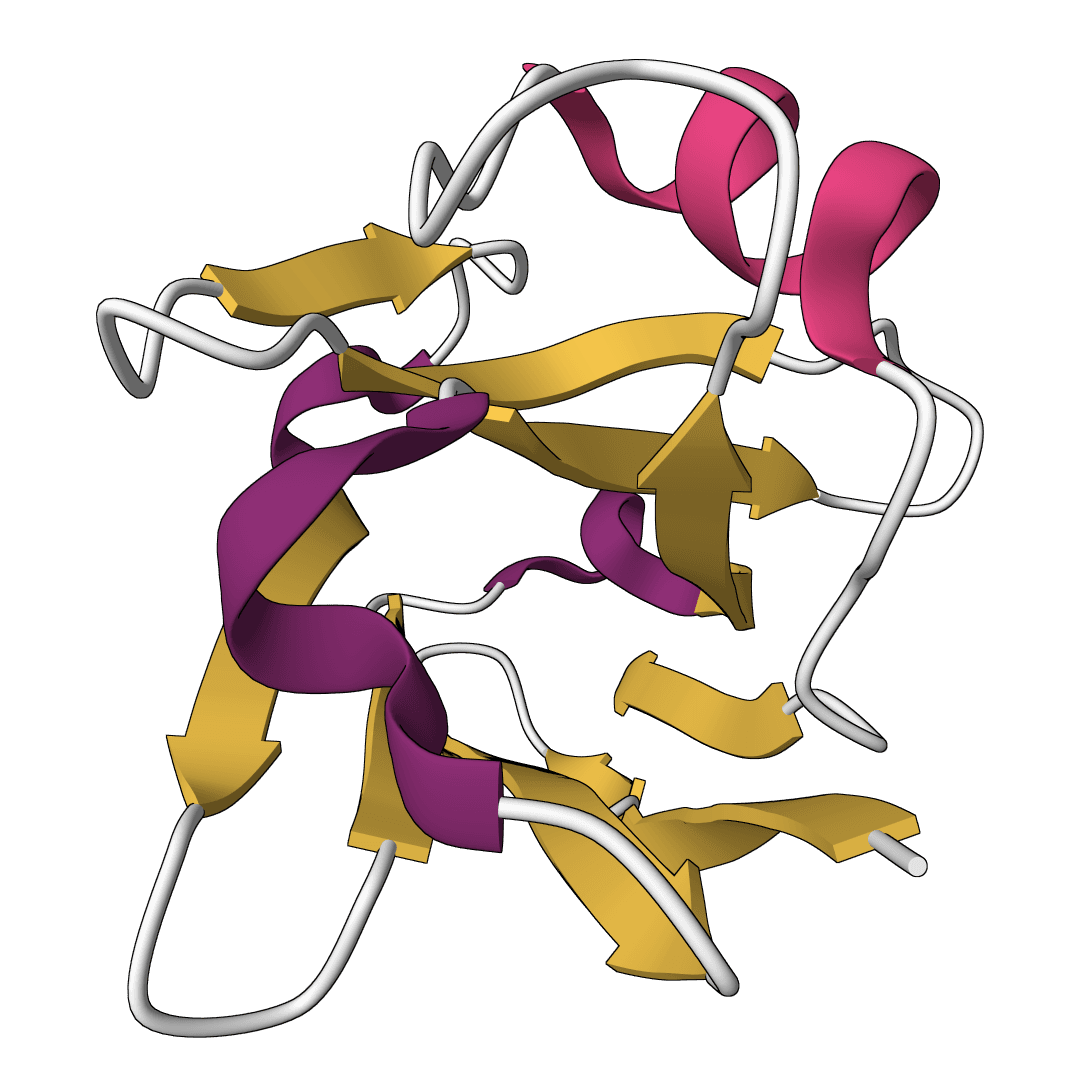

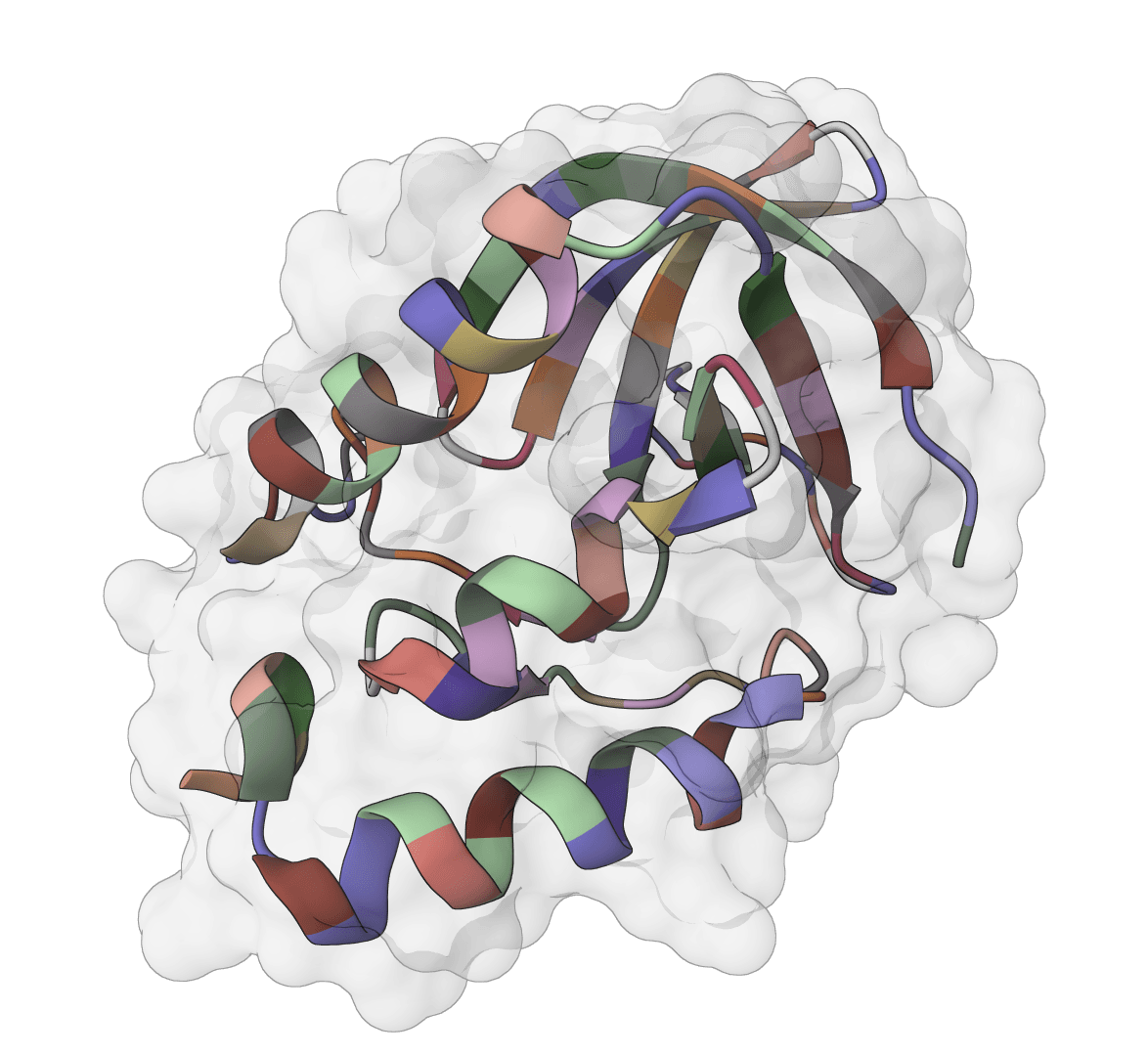

AbLang-2

Antibody-specific language model for predicting non-germline residues (NGL) in antibody sequences. AbLang-2 addresses germline bias in existing antibody language models by focusing on somatic hypermutation patterns, enabling more accurate prediction of amino acid likelihoods and generation of context-aware embeddings for antibody sequences.

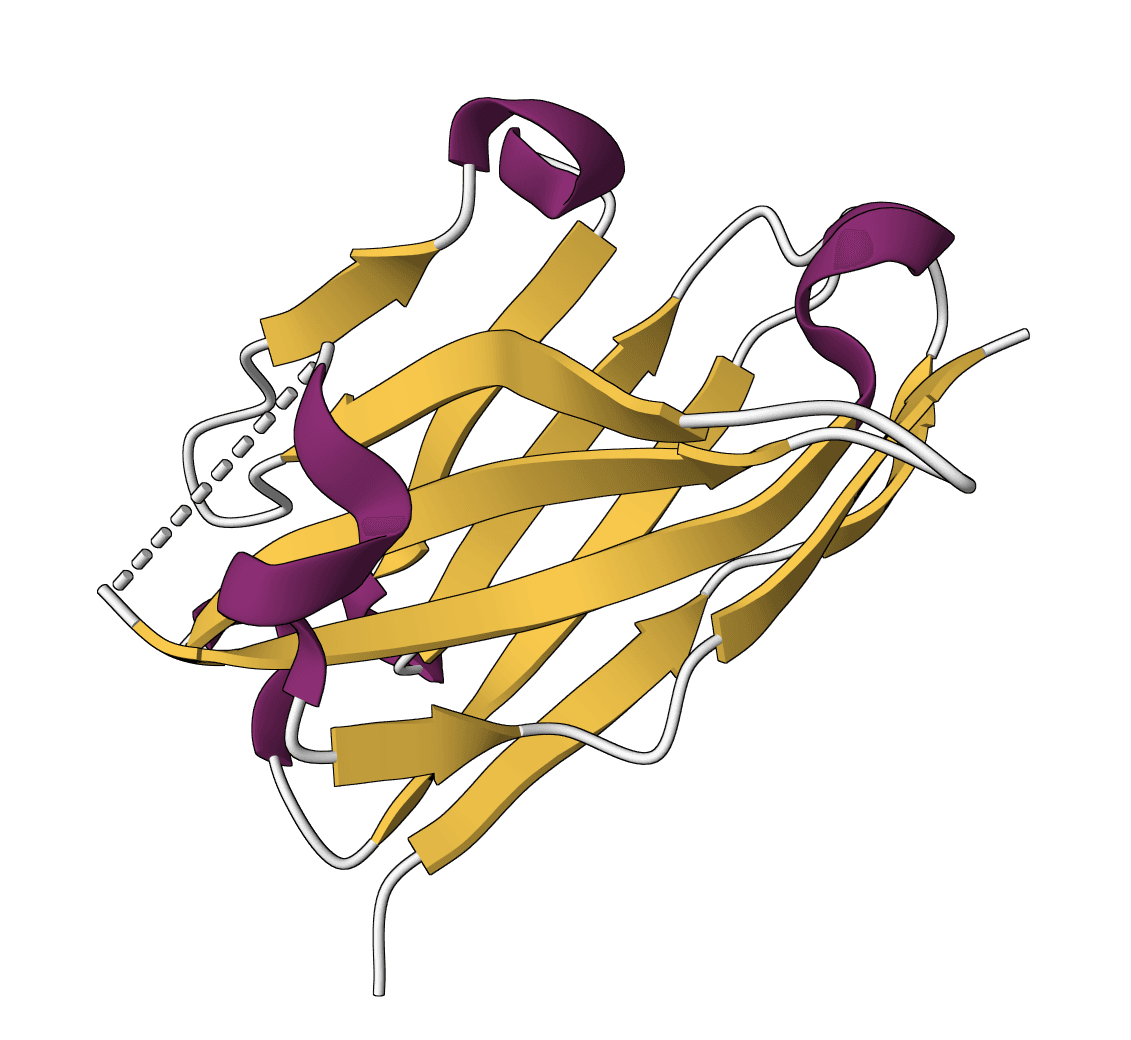

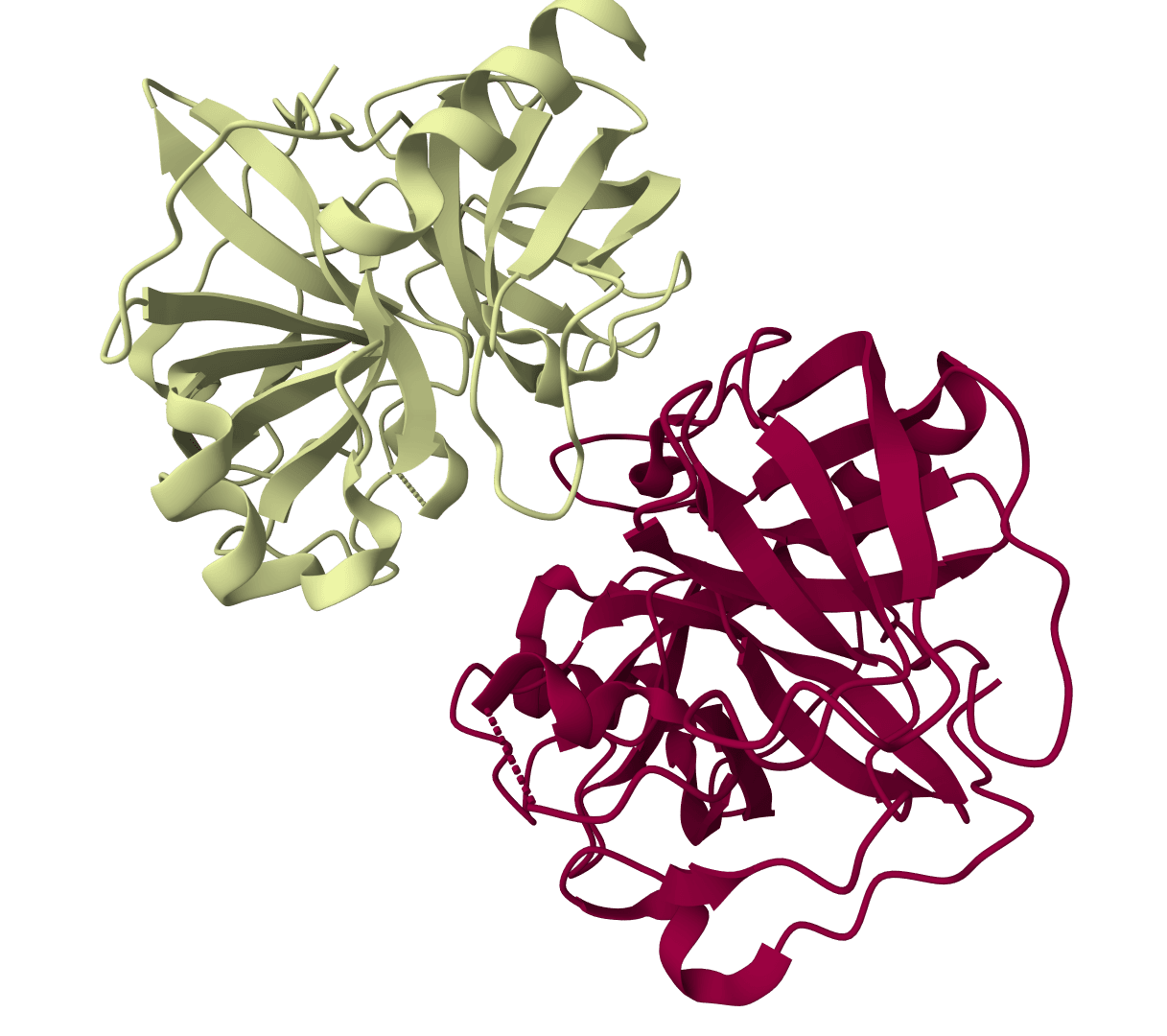

ProstT5

ProstT5 is a protein language model that bidirectionally translates between amino acid sequences and 3Di structural tokens. It enables fast structure-based searches and inverse folding by encoding structural information into a sequence-like representation.

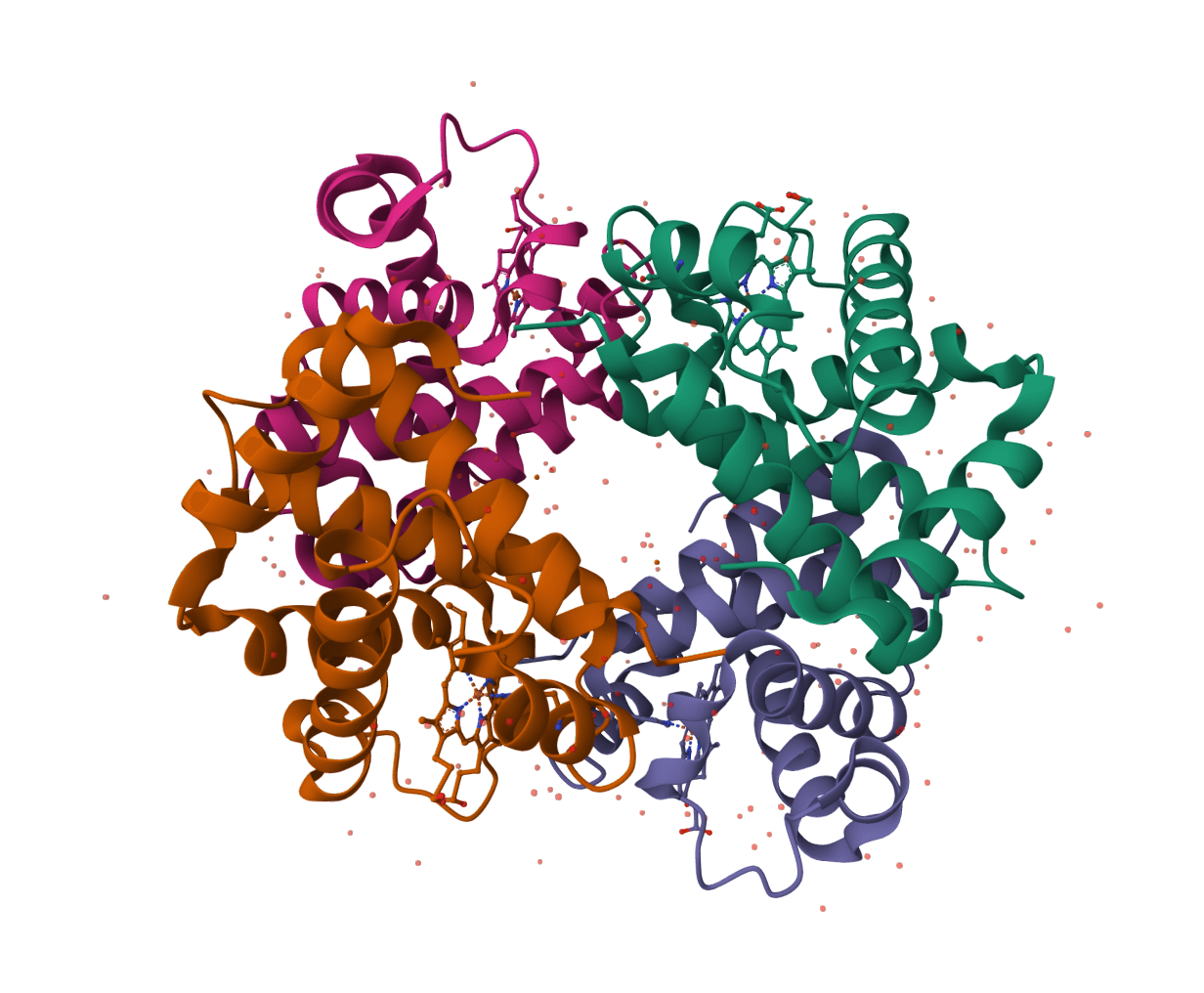

pySCA

Statistical Coupling Analysis for protein families. Identifies co-evolving residue groups (sectors) from multiple sequence alignments using the SCA method from the Ranganathan Lab.

AbLang

Restore missing residues in antibody sequences using a language model trained on the Observed Antibody Space (OAS) database. Achieves better restoration than IMGT germlines or ESM-1b while running 7x faster than ESM-1b.

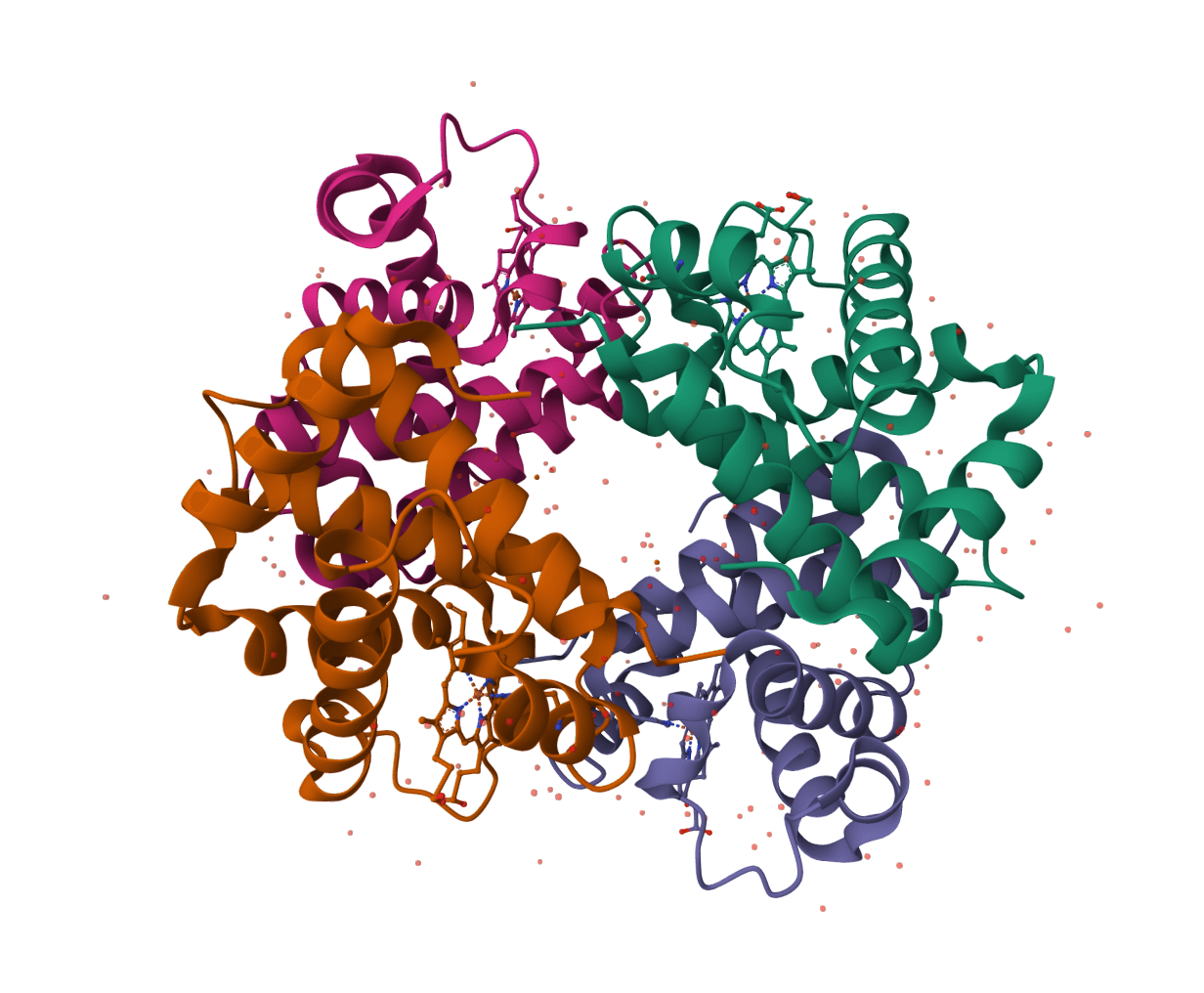

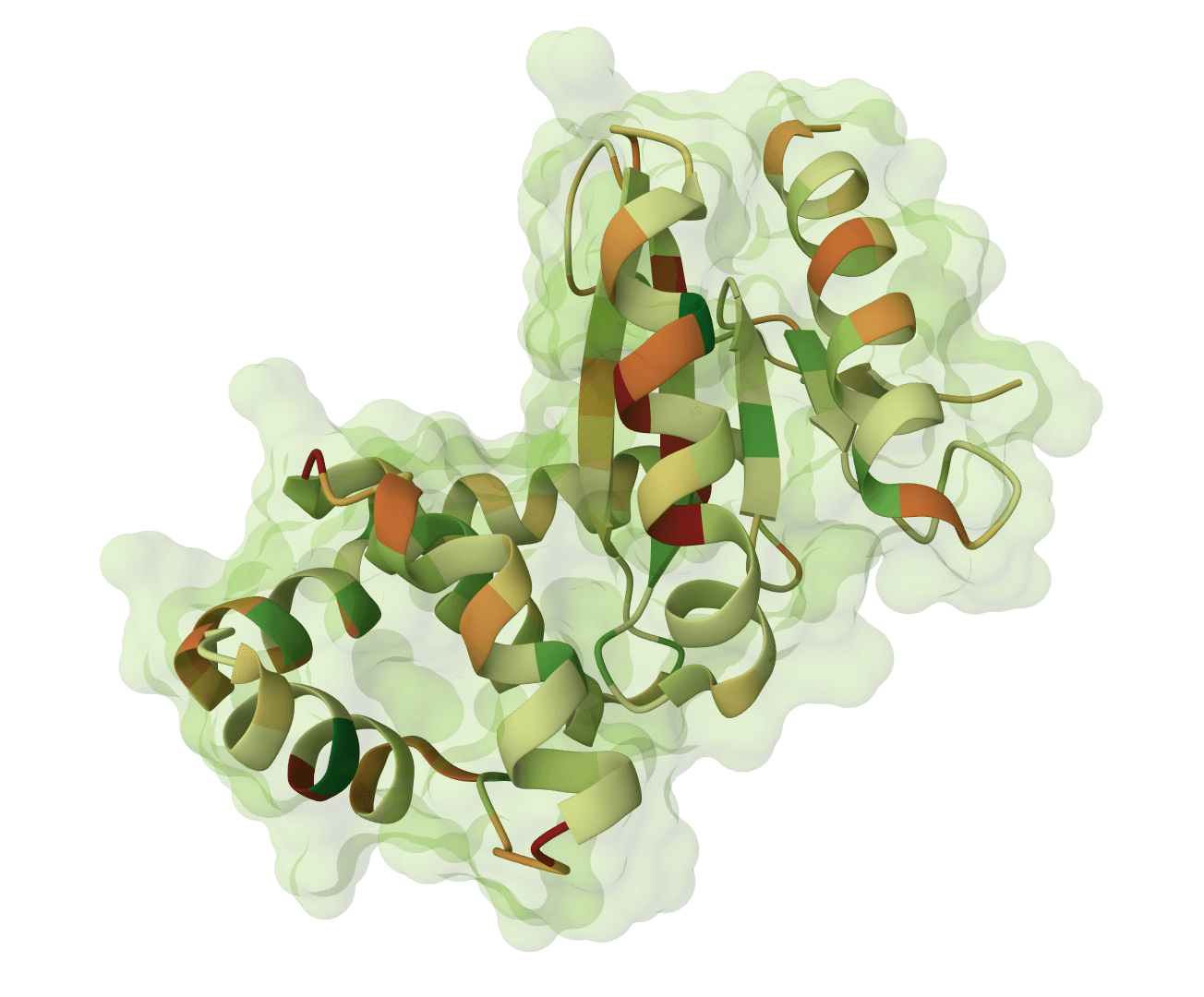

ThermoMPNN

Predict protein thermostability changes (ΔΔG) for point mutations using a graph neural network. Enables computational saturation mutagenesis screening to identify stabilizing mutations.
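A saturation mutagenesis screen of this kind can be sketched as follows. This is a minimal illustration, not ThermoMPNN's own interface: `predict_ddg` is a hypothetical callable standing in for whatever model produces the ΔΔG values, and the sketch assumes the sign convention that a negative ΔΔG is stabilizing.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues


def saturation_mutants(seq):
    """Yield every single-point mutant as 'A12G' (wild type, 1-based position, mutant)."""
    for pos, wt in enumerate(seq, start=1):
        for mut in AMINO_ACIDS:
            if mut != wt:
                yield f"{wt}{pos}{mut}"


def rank_stabilizing(seq, predict_ddg, top_n=5):
    """Score all point mutants and return the most stabilizing ones.

    predict_ddg(seq, mutation) is a hypothetical scoring function supplied by
    the caller; negative values are ASSUMED to mean stabilizing.
    """
    scored = [(m, predict_ddg(seq, m)) for m in saturation_mutants(seq)]
    stabilizing = [item for item in scored if item[1] < 0]
    return sorted(stabilizing, key=lambda item: item[1])[:top_n]


def dummy_ddg(seq, mutation):
    """Placeholder scorer for demonstration only; it carries no biological meaning."""
    return -1.0 if mutation.endswith("W") else 0.5


if __name__ == "__main__":
    for mutation, ddg in rank_stabilizing("MKT", dummy_ddg):
        print(mutation, ddg)
```

A sequence of length N yields 19 × N candidate mutations, so the cost of the screen grows linearly with sequence length.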

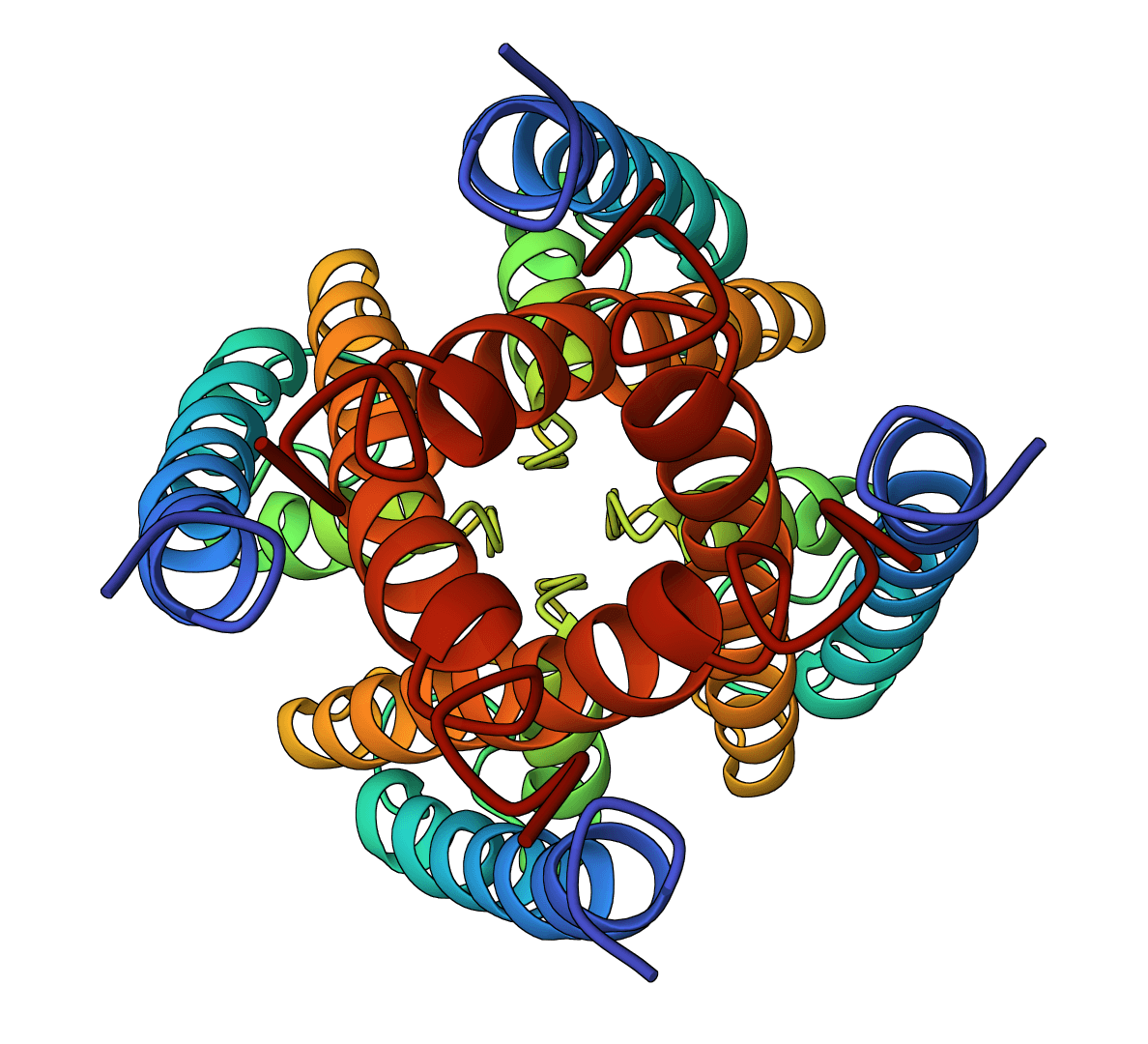

AF-Cluster

Cluster multiple sequence alignments (MSAs) to predict alternative protein conformations with AlphaFold2. Uses DBSCAN to split the MSA into sequence subgroups, each of which can be passed to AlphaFold2 as a separate input.
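To make the clustering step concrete, here is a small pure-Python DBSCAN over aligned sequences. It is a sketch under stated assumptions: the Hamming distance metric and the `eps` and `min_samples` values are illustrative choices, not AF-Cluster's actual settings.

```python
from collections import deque


def hamming(a, b):
    """Number of mismatched columns between two aligned, equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))


def dbscan(seqs, eps, min_samples):
    """Return one cluster label per sequence; -1 marks noise.

    A sequence is a core point if at least min_samples sequences
    (itself included) lie within distance eps of it.
    """
    n = len(seqs)
    neighbors = [
        [j for j in range(n) if hamming(seqs[i], seqs[j]) <= eps]
        for i in range(n)
    ]
    labels = [None] * n
    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        if len(neighbors[i]) < min_samples:
            labels[i] = -1  # provisional noise; may become a border point later
            continue
        cluster += 1
        labels[i] = cluster
        queue = deque(neighbors[i])
        while queue:
            j = queue.popleft()
            if labels[j] == -1:
                labels[j] = cluster  # noise reachable from a core point: border
            elif labels[j] is None:
                labels[j] = cluster
                if len(neighbors[j]) >= min_samples:
                    queue.extend(neighbors[j])  # expand only from core points
    return labels


if __name__ == "__main__":
    msa = ["AAAAAA", "AAAAAT", "AAAATA", "GGGGGG", "GGGGGC", "GGGGCG", "ACGTAC"]
    print(dbscan(msa, eps=2, min_samples=3))
```

Each non-noise label then defines a sub-alignment that could be used as a separate structure prediction input.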

DR-BERT

DR-BERT is a compact protein language model that predicts intrinsically disordered regions (IDRs) in proteins. It outputs per-residue disorder probability scores (0–1) from amino acid sequences, enabling fast and accurate annotation of disordered regions without structural data.
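Per-residue scores like these are usually turned into region annotations by thresholding. The sketch below shows one way to do that; the 0.5 cutoff and the `min_length` filter are assumptions chosen for illustration, not values prescribed by DR-BERT.

```python
def disordered_regions(scores, threshold=0.5, min_length=1):
    """Return (start, end) pairs, 1-based and inclusive, for runs of
    consecutive residues whose score is at or above the threshold."""
    regions = []
    start = None
    for i, score in enumerate(scores):
        if score >= threshold:
            if start is None:
                start = i  # a new run begins here
        elif start is not None:
            if i - start >= min_length:
                regions.append((start + 1, i))
            start = None
    # Close a run that extends to the end of the sequence.
    if start is not None and len(scores) - start >= min_length:
        regions.append((start + 1, len(scores)))
    return regions


if __name__ == "__main__":
    example = [0.9, 0.8, 0.2, 0.1, 0.7, 0.6, 0.95]
    print(disordered_regions(example))
    print(disordered_regions(example, min_length=3))
```

Raising `min_length` suppresses very short runs, which can be useful when isolated high-scoring residues are not of interest.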

IPC 2.0 (isoelectric point calculator)

Predict the isoelectric point (pI) of proteins and peptides using 18+ validated pKa scales, support vector regression (SVR) models, and deep learning.
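The pKa-scale approach can be illustrated with the Henderson-Hasselbalch equation: compute the net charge of the sequence at a given pH, then search for the pH at which it is zero. The pKa values below are illustrative textbook-style numbers chosen for this sketch; they are not one of the IPC 2.0 scales, and the SVR and deep learning models are not represented here.

```python
# Illustrative pKa values (ASSUMED for this sketch, not an IPC 2.0 scale).
PKA_POSITIVE = {"K": 10.8, "R": 12.5, "H": 6.5}
PKA_NEGATIVE = {"D": 3.9, "E": 4.1, "C": 8.5, "Y": 10.1}
PKA_N_TERM = 8.6
PKA_C_TERM = 3.6


def net_charge(seq, ph):
    """Net charge of a peptide at the given pH (Henderson-Hasselbalch)."""
    positive = 1.0 / (1.0 + 10 ** (ph - PKA_N_TERM))
    negative = 1.0 / (1.0 + 10 ** (PKA_C_TERM - ph))
    for residue in seq:
        if residue in PKA_POSITIVE:
            positive += 1.0 / (1.0 + 10 ** (ph - PKA_POSITIVE[residue]))
        elif residue in PKA_NEGATIVE:
            negative += 1.0 / (1.0 + 10 ** (PKA_NEGATIVE[residue] - ph))
    return positive - negative


def isoelectric_point(seq, tol=1e-4):
    """Bisect on pH in [0, 14]; net charge decreases monotonically with pH."""
    lo, hi = 0.0, 14.0
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if net_charge(seq, mid) > 0:
            lo = mid  # still positively charged: the pI is at a higher pH
        else:
            hi = mid
    return (lo + hi) / 2.0


if __name__ == "__main__":
    for peptide in ("KKKK", "DDDD", "ACDEFGHIK"):
        print(peptide, round(isoelectric_point(peptide), 2))
```

Swapping in a different pKa dictionary changes the predicted pI, which is why tools of this kind report results for several scales.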

MSA Viewer

Interactive viewer for multiple sequence alignments with color-coded residues and a consensus sequence.
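A consensus sequence is commonly derived by taking the most frequent residue in each alignment column. The sketch below uses that simple majority rule and ignores gaps when counting; both choices are assumptions for illustration, and the viewer may compute its consensus differently.

```python
from collections import Counter


def consensus(alignment, gap="-"):
    """Majority-rule consensus of equal-length aligned sequences.

    Gaps are ignored when counting; a column made only of gaps yields a gap.
    """
    if len({len(seq) for seq in alignment}) != 1:
        raise ValueError("alignment must be non-empty with equal-length rows")
    result = []
    for column in zip(*alignment):
        counts = Counter(c for c in column if c != gap)
        result.append(counts.most_common(1)[0][0] if counts else gap)
    return "".join(result)


if __name__ == "__main__":
    print(consensus(["MKT-A", "MRT-A", "MKTGA"]))
```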

ORF Finder

Find all open reading frames (ORFs) in DNA sequences. Searches all six reading frames (three on each strand) and supports multiple genetic codes.
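A six-frame search can be sketched as below. This is a minimal illustration that assumes the standard genetic code only, defines an ORF as an ATG start codon followed in frame by a stop codon, and reports coordinates relative to the strand being scanned; the tool itself may use different definitions.

```python
from itertools import product

# Standard genetic code, codons ordered over the bases T, C, A, G.
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = dict(zip(("".join(c) for c in product(BASES, repeat=3)), AMINO))


def reverse_complement(seq):
    return seq.translate(str.maketrans("ACGT", "TGCA"))[::-1]


def translate(seq):
    """Translate complete codons; unknown codons become 'X'."""
    return "".join(
        CODON_TABLE.get(seq[i:i + 3], "X") for i in range(0, len(seq) - 2, 3)
    )


def find_orfs(dna, min_codons=2):
    """Return (strand, frame, start, end, protein) for each ATG...stop ORF.

    start and end are 0-based, end-exclusive offsets on the scanned strand;
    end includes the stop codon, while the protein excludes it.
    """
    dna = dna.upper()
    orfs = []
    for strand, seq in (("+", dna), ("-", reverse_complement(dna))):
        for frame in range(3):
            start = None
            for i in range(frame, len(seq) - 2, 3):
                codon = seq[i:i + 3]
                if start is None and codon == "ATG":
                    start = i
                elif start is not None and CODON_TABLE.get(codon) == "*":
                    protein = translate(seq[start:i])
                    if len(protein) >= min_codons:
                        orfs.append((strand, frame, start, i + 3, protein))
                    start = None
    return orfs


if __name__ == "__main__":
    for orf in find_orfs("CCATGAAATAGGG"):
        print(orf)
```

Supporting other genetic codes would amount to swapping `CODON_TABLE` (and, for some codes, the set of start codons).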